Claude Agent SDK Architecture Breakdown

Claude Code is easiest to misunderstand when it is treated as a smart terminal UI. Based on Anthropic’s public documentation, the more useful mental model is that Claude Code sits on top of a reusable agent runtime, and that runtime is now exposed more directly as the Claude Agent SDK. Anthropic has also said explicitly that the former Claude Code SDK was renamed to the Claude Agent SDK, and that the same underlying harness now powers the native Claude integration inside Xcode.

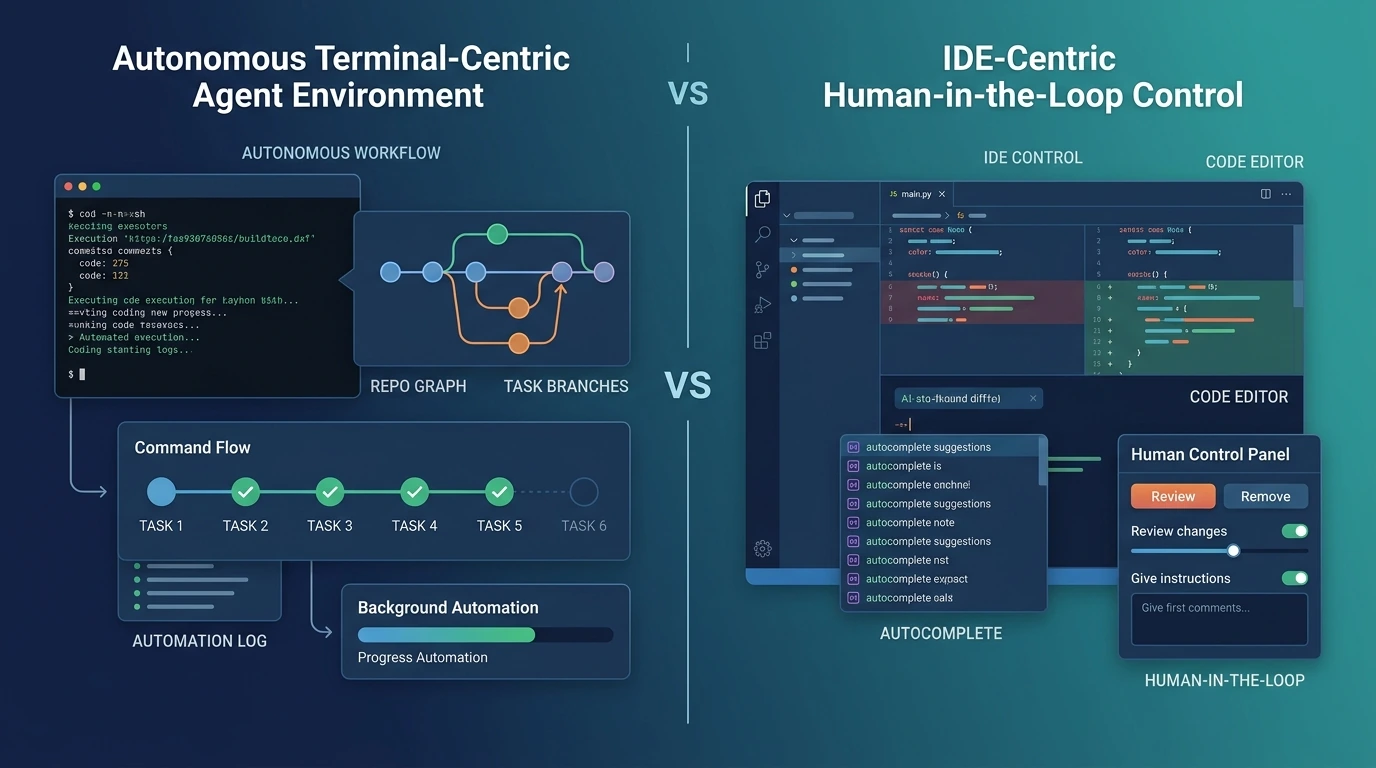

That distinction matters if you are building agents rather than just using one. A coding assistant can succeed as a product with good autocomplete, strong model quality, and a polished UI. An agent runtime needs something else: a durable execution loop, explicit tool surfaces, context management, permissions, extensibility, and a way to preserve project conventions across long-running work. The public record suggests that this is where Claude Code’s real architecture lives.

Testing criteria

For this article, I treated hands-on testing as a constrained architecture verification exercise rather than a benchmark study. I focused on five questions: what the runtime loop actually does, how context persists and compacts, how extension points are separated, where governance lives, and whether Anthropic’s broader product moves support the claim that Claude Code is really a harness plus product surface rather than a monolithic app.

What I tested

I could verify the SDK surface, configuration model, and architectural boundaries from Anthropic’s current public docs and engineering posts. I could not run a live authenticated agent session in this environment, so I am not making claims about production latency, pricing behavior under load, or end-to-end reliability in a real deployment. My testing here is best read as interface-level and workflow-level validation, not as a quantitative bake-off.

From that limited testing, what stood out most was how little of the system is explained by “the model” alone. The structure Anthropic documents is much closer to a layered runtime: the model reasons inside a loop, tools take action, hooks and permissions shape behavior, CLAUDE.md and settings inject durable context, and subagents, skills, plugins, and MCP expand the system without forcing everything into one giant prompt.

What the harness actually is

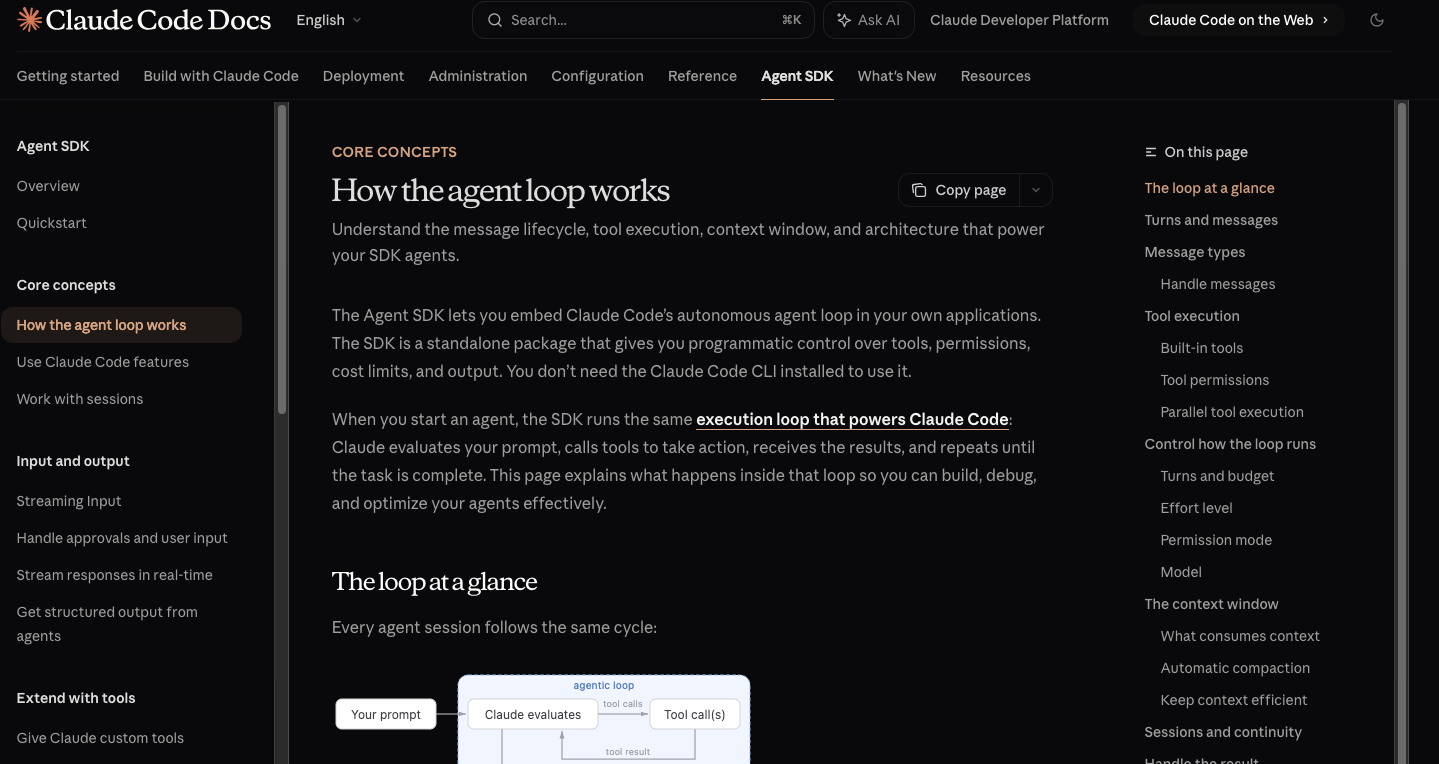

The clearest direct statement comes from Anthropic’s own documentation: the Agent SDK embeds the same autonomous agent loop that powers Claude Code, and it gives developers programmatic control over tools, permissions, cost limits, and output. That is already enough to reframe the product. Claude Code is not just a chat interface with shell access. It is one surface on top of a runtime that Anthropic now expects other applications to reuse.

Based on the public docs, the most practical way to understand that runtime is as six layers: execution, context, capability, orchestration, governance, and product surface. This is my synthesis, not Anthropic’s official diagram, but it is the cleanest way to reconcile the overview, agent loop, hooks, subagents, skills, plugins, MCP, and settings documentation into one architecture.

| Layer | What it does | Why it matters |
|---|---|---|
| Execution loop | Runs the prompt → tool call → result → repeat cycle | Makes the agent iterative rather than one-shot |
| Context layer | Carries system prompt, tool definitions, history, CLAUDE.md, skill descriptions | Keeps work coherent across turns |
| Capability layer | Exposes built-in tools, custom tools, and MCP integrations | Lets Claude act instead of only describe |
| Orchestration layer | Uses subagents and skills to divide work | Improves parallelism and reduces context pollution |
| Governance layer | Applies settings, permissions, approval modes, and hooks | Keeps the system controllable and safer |
| Product surface | Presents the runtime in Claude Code, the SDK, and now Xcode | Explains why the same harness can show up in different interfaces |

How the execution loop works

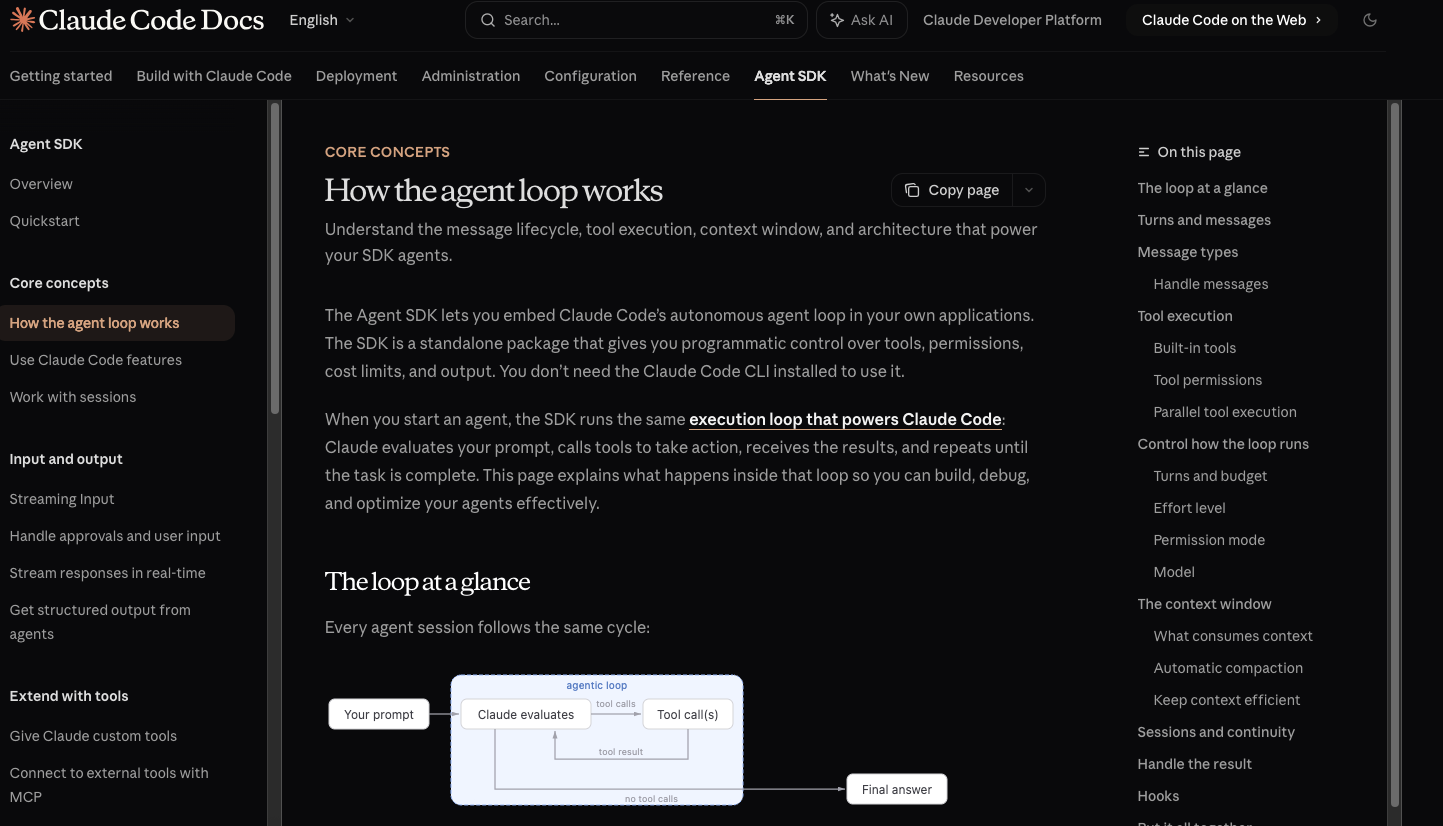

Anthropic’s agent loop page is unusually explicit. A session starts with a prompt plus the system prompt, tool definitions, and conversation history. Claude evaluates the state, emits text and optional tool calls, the SDK executes those tools, returns the results to Claude, and repeats until Claude produces a response with no more tool calls. The SDK then yields a final result message with output, token usage, cost, and session ID.

That is a different operating model from ordinary request-response tool calling. In a simpler agent setup, your application often has to orchestrate each turn manually. Here, the harness owns the loop. It decides when another turn is needed, when tools should run, when a result has converged, and when limits such as max turns or max budget should stop execution. Anthropic also exposes effort and approval modes as runtime options, which makes the loop configurable rather than hard-coded.
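
To make the "harness owns the loop" idea concrete, here is a minimal sketch of such a loop in plain Python. It is not Anthropic's implementation: `ModelReply`, `LoopResult`, `run_agent_loop`, and the `max_turns` / `max_budget_usd` parameter names are hypothetical stand-ins, and the model is a scripted function rather than a real API call.

```python
from dataclasses import dataclass, field


@dataclass
class ModelReply:
    text: str
    tool_calls: list = field(default_factory=list)  # list of (tool name, arguments dict)
    cost_usd: float = 0.0


@dataclass
class LoopResult:
    output: str
    turns: int
    total_cost_usd: float
    stop_reason: str  # "completed", "max_turns", or "max_budget"


def run_agent_loop(prompt, model, tools, max_turns=10, max_budget_usd=1.0):
    """Hypothetical harness loop: call the model, run its tools, feed results back."""
    history = [{"role": "user", "content": prompt}]
    total_cost = 0.0
    last_text = ""
    for turn in range(1, max_turns + 1):
        reply = model(history)
        total_cost += reply.cost_usd
        last_text = reply.text
        history.append({"role": "assistant", "content": reply.text})
        if not reply.tool_calls:
            # No tool calls means the model considers the task finished.
            return LoopResult(reply.text, turn, total_cost, "completed")
        if total_cost >= max_budget_usd:
            return LoopResult(reply.text, turn, total_cost, "max_budget")
        for name, args in reply.tool_calls:
            result = tools[name](**args)
            history.append({"role": "tool", "name": name, "content": result})
    return LoopResult(last_text, max_turns, total_cost, "max_turns")


def scripted_model(history):
    """Stand-in for the model: asks for one file read, then answers."""
    if not any(message["role"] == "tool" for message in history):
        return ModelReply("Reading file", [("read_file", {"path": "a.txt"})], 0.01)
    return ModelReply("Done: " + history[-1]["content"], [], 0.01)
```

The caller supplies only a prompt, a tool table, and limits. Everything about turn-taking lives inside `run_agent_loop`, which is the property the paragraph above describes.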

This is one reason the Xcode integration matters. Anthropic’s February 2026 announcement did not present Xcode support as a new chat panel. It described Xcode 26.3 as using the same underlying Claude Agent SDK harness, with support for autonomous work, background tasks, plugins, subagents, and even visual verification through Previews. That is exactly what a reusable runtime looks like when it graduates into another product surface.

Why context management is part of the architecture, not a side feature

The context model is one of the strongest clues that Claude Code was built as an agent system, not just a coding chatbot. Anthropic documents that context accumulates across turns and includes the system prompt, tool definitions, conversation history, tool inputs and outputs, CLAUDE.md content when setting sources are enabled, and skill descriptions. Anthropic also says prompt caching reduces repeated prefix cost for stable content such as the system prompt and CLAUDE.md.

Compaction is the more important design choice. When the context window approaches its limit, the SDK automatically summarizes older history and emits a compact-boundary event. Anthropic is also clear about the trade-off: compaction can lose specific instructions from early in the conversation, which is why persistent rules belong in CLAUDE.md rather than in a one-off opening prompt. That is a runtime discipline, not a stylistic preference.
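
The trade-off is easier to see in a toy version. The sketch below assumes a crude character-based token estimate and a placeholder summarizer. `compact_history` and the shape of the `compact_boundary` event are hypothetical, chosen only to mirror the behavior described above.

```python
def estimate_tokens(text):
    # Crude stand-in for a tokenizer: roughly one token per four characters.
    return max(1, len(text) // 4)


def compact_history(history, limit_tokens, keep_recent=2, summarize=None):
    """Return (new_history, events). Older messages collapse into one summary."""
    total = sum(estimate_tokens(message["content"]) for message in history)
    split = len(history) - keep_recent
    if total <= limit_tokens or split <= 0:
        return history, []
    older, recent = history[:split], history[split:]
    if summarize is None:
        # Placeholder summarizer; a real one would ask the model for a summary.
        summarize = lambda msgs: f"[summary of {len(msgs)} earlier messages]"
    summary = {"role": "system", "content": summarize(older)}
    event = {
        "type": "compact_boundary",
        "pre_tokens": total,
        "dropped_messages": len(older),
    }
    return [summary] + recent, [event]


def build_context(persistent_rules, history):
    """Persistent rules are re-injected on every turn, so compaction cannot drop them."""
    return [{"role": "system", "content": persistent_rules}] + history
```

An instruction that only appeared in the first user message disappears once that message is summarized away, while anything passed through `build_context` survives every compaction. That is the mechanical reason behind the advice to keep durable rules in CLAUDE.md.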

This is also where the system-prompt story gets more nuanced than many third-party explainers suggest. The Agent SDK uses an empty system prompt by default, and developers can opt into the claude_code preset if they want Claude Code’s built-in tool instructions and behavior. Anthropic also notes that the preset does not automatically load CLAUDE.md; you still need to configure setting sources explicitly, through settingSources in the TypeScript SDK or setting_sources in the Python SDK. In practice, that means behavior is distributed across several control surfaces rather than hidden in one magical prompt.
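
The interaction between those two switches can be written down as a small truth table. The function below is a hypothetical model of the documented defaults, not SDK code. It only mirrors the option names mentioned above, and it considers only the project-level CLAUDE.md.

```python
def resolve_session_config(system_prompt=None, setting_sources=None):
    """Hypothetical model: which prompt is used, and is project CLAUDE.md loaded?"""
    uses_preset = (
        isinstance(system_prompt, dict)
        and system_prompt.get("preset") == "claude_code"
    )
    sources = list(setting_sources or [])
    return {
        # Empty by default; the preset is opt-in; a plain string is a custom prompt.
        "system_prompt": "claude_code preset" if uses_preset else (system_prompt or ""),
        # Assumption: project memory loads only when the "project" source is enabled,
        # regardless of which system prompt was chosen.
        "loads_claude_md": "project" in sources,
    }


preset = {"type": "preset", "preset": "claude_code"}
default_config = resolve_session_config()
preset_only = resolve_session_config(preset)
preset_with_memory = resolve_session_config(preset, ["project"])
```

Here `preset_only` still reports `loads_claude_md` as false. The two controls are independent, which is the point of the paragraph above.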

Tools are only one layer of the system

The built-in tool list is revealing. Anthropic groups tools into file operations, search, execution, web, discovery, and orchestration. The orchestration bucket includes Agent, Skill, AskUserQuestion, and TodoWrite, which is a strong signal that the runtime is not only about invoking external actions. It also has native concepts for delegating work, loading higher-level procedures, asking for clarification, and tracking tasks.

That design choice lines up with Anthropic’s engineering explanation of the SDK. In the September 2025 engineering post, Anthropic framed the broader principle as “giving your agents a computer,” but it also emphasized that tools should be the primary building blocks of execution, that bash remains useful as a flexible escape hatch, and that code generation can itself become an execution strategy for complex work such as file creation and repeatable logic.

MCP sits one layer above ordinary custom tools because it standardizes integration rather than just exposing another callable function. Anthropic’s Claude Code MCP docs describe MCP as the open standard that lets Claude connect to external tools, databases, and APIs, and the examples span issue trackers, monitoring systems, databases, design tools, Gmail, and more. That matters architecturally because it separates “what the agent can do” from “how every integration is individually implemented.”
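
A small sketch shows what that separation buys. It assumes each server exposes a name and a table of callable tools, and that tools are namespaced as `mcp__<server>__<tool>`, which is the naming pattern I understand Claude Code to use. `FakeMcpServer` and `collect_tools` are hypothetical, and no real MCP protocol traffic is involved.

```python
class FakeMcpServer:
    """Stand-in for an MCP server: a name plus a dict of callable tools."""

    def __init__(self, name, tools):
        self.name = name
        self.tools = tools


def collect_tools(servers, allowed=None):
    """Build one namespaced tool catalog from many servers, optionally filtered."""
    catalog = {}
    for server in servers:
        for tool_name, fn in server.tools.items():
            qualified = f"mcp__{server.name}__{tool_name}"
            if allowed is None or qualified in allowed:
                catalog[qualified] = fn
    return catalog


issues = FakeMcpServer("tracker", {"list_issues": lambda: ["BUG-1", "BUG-2"]})
metrics = FakeMcpServer("monitoring", {"error_rate": lambda: 0.02})
catalog = collect_tools([issues, metrics])
```

The agent side sees one uniform catalog, and adding a new integration means registering another server rather than writing another bespoke tool wrapper.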

The orchestration layer is the most underestimated part

Subagents are where the architecture starts to look less like a CLI and more like an operating model for long-running work. Anthropic’s subagents docs say each subagent has its own context window, can be configured with specific tools, and works independently before returning results. The engineering post adds the practical reason: subagents help with both parallelization and context management because they send relevant information back to the orchestrator rather than their full working state.
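
The context-isolation argument can be sketched in a few lines. This is an illustrative toy, not the SDK's subagent mechanism: `run_subagent` and `orchestrate` are hypothetical names, threads stand in for independent workers, and each "tool" is an ordinary function.

```python
from concurrent.futures import ThreadPoolExecutor


def run_subagent(name, task, allowed_tools, tools):
    """Work in a private context and hand back only a short summary."""
    context = [f"task: {task}"]  # private to this subagent
    for tool_name in allowed_tools:
        # Each subagent may only use the tools it was configured with.
        context.append(f"{tool_name}: {tools[tool_name](task)}")
    summary = f"{name} finished '{task}' using {len(allowed_tools)} tool(s)"
    return {"summary": summary, "private_context_items": len(context)}


def orchestrate(assignments, tools):
    """Run subagents in parallel; the main context keeps only their summaries."""
    main_context = []
    with ThreadPoolExecutor() as pool:
        futures = [
            pool.submit(run_subagent, a["name"], a["task"], a["tools"], tools)
            for a in assignments
        ]
        for future in futures:  # iterate in submission order for stable output
            main_context.append(future.result()["summary"])
    return main_context
```

However much material a worker reads, the orchestrator's context grows by one line per worker. That is the practical meaning of "relevant information rather than full working state."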

That is not a cosmetic feature. It changes how you design complex agents. A main agent can stay comparatively lean while specialized workers search, review, validate, or transform information in isolation. When Anthropic says the same harness now powers Xcode with subagents and background tasks, it is reinforcing that the harness is designed for delegated autonomous work, not just inline code editing.

Skills solve a different problem. Anthropic describes Agent Skills as discoverable folders containing SKILL.md plus optional scripts and resources, with the model deciding when to use them. The key idea is procedural packaging: instead of repeating the same prompt instructions every time, you bundle expertise into reusable capability modules. Anthropic’s engineering write-up on Agent Skills pushes the same point further, describing skills as a way to equip general-purpose agents with domain-specific expertise in a composable and portable form.
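
As a rough illustration of "discoverable folders," the sketch below scans a directory for `SKILL.md` files and reads only a `name` and `description` from a front-matter block. The front-matter layout and the helper names are assumptions for this example, and the parser is deliberately minimal rather than a full YAML reader.

```python
from pathlib import Path


def read_frontmatter(text):
    """Parse simple 'key: value' lines between two '---' markers."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}
    meta = {}
    for line in lines[1:]:
        if line.strip() == "---":
            break
        key, sep, value = line.partition(":")
        if sep:
            meta[key.strip()] = value.strip()
    return meta


def discover_skills(root):
    """Find every <root>/<folder>/SKILL.md and return its metadata only."""
    skills = []
    for skill_file in sorted(Path(root).glob("*/SKILL.md")):
        meta = read_frontmatter(skill_file.read_text(encoding="utf-8"))
        skills.append({
            "name": meta.get("name", skill_file.parent.name),
            "description": meta.get("description", ""),
            "path": str(skill_file),
        })
    return skills


def skill_catalog_prompt(skills):
    """Only short descriptions enter the context; bodies are read when needed."""
    return "\n".join(f"- {s['name']}: {s['description']}" for s in skills)
```

Keeping the full instructions on disk and surfacing only descriptions is what lets the model decide when a skill is relevant without paying for every skill's body on every turn.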

Hooks are more important than they first appear because they move part of the control plane out of the model’s discretion. Anthropic’s hooks docs define events such as PreToolUse, PostToolUse, UserPromptSubmit, Stop, SubagentStop, and PreCompact, and the agent loop docs note that hooks run in the application process rather than inside the agent’s context window. That means they do not just add automation. They create deterministic interception points for validation, auditing, policy enforcement, and session management.
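
A minimal sketch of that interception pattern follows. The event names come from the list above, but `HookRegistry`, `call_tool`, and the `{"block": ..., "reason": ...}` decision shape are hypothetical and much simpler than the real hook contract.

```python
from collections import defaultdict


class HookRegistry:
    """Holds callbacks per event; runs in application code, not in model context."""

    def __init__(self):
        self._hooks = defaultdict(list)
        self.audit_log = []

    def on(self, event, callback):
        self._hooks[event].append(callback)

    def fire(self, event, payload):
        for callback in self._hooks[event]:
            decision = callback(payload)
            if decision and decision.get("block"):
                return decision  # first blocking hook wins
        return {"block": False}


def call_tool(hooks, tools, name, args):
    """Run a tool only if no PreToolUse hook blocks it, then notify PostToolUse."""
    decision = hooks.fire("PreToolUse", {"tool": name, "args": args})
    if decision["block"]:
        return f"blocked: {decision['reason']}"
    result = tools[name](**args)
    hooks.fire("PostToolUse", {"tool": name, "args": args, "result": result})
    return result


def block_destructive_shell(payload):
    """Example policy: refuse shell commands containing 'rm -rf'."""
    command = payload["args"].get("command", "")
    if payload["tool"] == "Bash" and "rm -rf" in command:
        return {"block": True, "reason": "destructive command"}
    return None
```

The check is ordinary code with a fixed outcome for a given input. The model cannot talk its way past it, and none of it consumes context tokens.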

Plugins then act as a distribution mechanism for many of these same extension types. Anthropic’s plugins docs say plugins can provide custom commands, agents, hooks, skills, and MCP servers. Taken together, skills package expertise, hooks package control, MCP packages integrations, and plugins package delivery. That is a much more mature extension story than “let the model call some tools.”

Governance is built into the runtime

One easy mistake is to think governance in Claude Code is an afterthought. Anthropic’s settings docs describe a hierarchical settings model with user settings, project settings, local project settings, and enterprise-managed policies. Enterprise-managed policies take precedence over everything else, and among the remaining layers the more specific scope wins, so local project settings override shared project settings, which override user settings. That is configuration architecture, not UI decoration.
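
A layered settings model of this kind reduces to an ordered merge. The sketch below assumes a precedence order of user, then project, then local project, then managed policy, with later layers winning. The scope names, keys, and `merge_settings` helper are illustrative, not the real settings schema.

```python
# Lowest precedence first; each later scope overrides the ones before it.
# This ordering is an assumption made for the sketch.
PRECEDENCE = ["user", "project", "local", "managed"]


def merge_settings(layers):
    """Return (merged settings, which scope supplied each key)."""
    merged = {}
    origin = {}
    for scope in PRECEDENCE:
        for key, value in layers.get(scope, {}).items():
            merged[key] = value
            origin[key] = scope
    return merged, origin


layers = {
    "user": {"model": "fast", "theme": "dark"},
    "project": {"model": "balanced", "allow_web": True},
    "managed": {"allow_web": False},
}
merged, origin = merge_settings(layers)
```

Tracking `origin` alongside the merged values is a small design choice that pays off in practice. When a setting behaves unexpectedly, the first question is usually which layer set it.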

Permissions are part of that same story. Anthropic exposes approval behavior, allow rules, deny rules, and approval modes in the SDK and Claude Code surfaces, and the settings docs explicitly recommend deny rules for sensitive files such as environment files, secrets, and credentials. In other words, the runtime is designed on the assumption that agents act on real systems and therefore need bounded access.
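
The allow and deny idea can be sketched as a small rule evaluator. The rule strings below imitate a `Tool(target)` style, but the glob matching is a simplification and `check_permission` is a hypothetical helper. It assumes deny rules are checked first and that unmatched requests fall back to asking the user.

```python
from fnmatch import fnmatchcase


def check_permission(tool, target, allow, deny):
    """Return 'deny', 'allow', or 'ask' for a single tool request."""
    request = f"{tool}({target})"
    # Deny rules are evaluated first so they cannot be overridden by an allow rule.
    if any(fnmatchcase(request, rule) for rule in deny):
        return "deny"
    if any(fnmatchcase(request, rule) for rule in allow):
        return "allow"
    # Nothing matched: fall back to asking for approval.
    return "ask"


ALLOW = ["Read(src/*)", "Bash(git status)"]
DENY = ["Read(*.env)", "Read(*secrets*)"]
```

With these rules, a request to read `src/.env` is denied even though `Read(src/*)` would otherwise allow it, which is the bounded-access behavior the paragraph above describes for environment files and credentials.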

Anthropic is also explicit about a boundary many explainers blur: Claude Code’s internal system prompt is not published on the docs site. The company recommends using CLAUDE.md or appended system prompt instructions to change behavior instead. That matters because it tells us what kind of analysis is credible. Public architecture work can explain the control surfaces Anthropic documents. It should not pretend to know undocumented internals.

So how should you think about the architecture?

The most practical conclusion is that Claude Code’s harness is not best understood as “Claude with some tools.” It is closer to a reusable agent runtime with explicit support for iterative execution, durable and compactable context, delegated workers, packaged expertise, deterministic interceptors, standardized integrations, and hierarchical policy. Claude Code is the best-known interface to that runtime today, but Anthropic’s own SDK and Xcode positioning make it clear that the runtime is the deeper product.

That does not make the system magical. The trade-offs are visible in the docs as well. Compaction can lose detail. More tools and integrations mean more governance work. Custom system prompts can replace useful built-in safety and environment behavior if you are careless. MCP output can get large enough to need explicit limits. The strength of the system is not that it removes architecture decisions. The strength is that it surfaces them.

For builders, that is the real value of this architecture breakdown. If you are only trying to ask Claude to edit files from a terminal, Claude Code already gives you a polished surface. If you are trying to build a long-running agent that needs control over execution, memory, extensions, and policy, the Claude Agent SDK is the layer to study because it exposes the harness itself.

FAQ

What is the relationship between Claude Code and the Claude Agent SDK?

Claude Code is a product surface, while the Claude Agent SDK exposes the underlying agent harness more directly for developers. Anthropic says the SDK runs the same autonomous loop that powers Claude Code, and it renamed the old Claude Code SDK to reflect the broader role of that harness.

Why is the agent loop more important than the UI?

The agent loop is where the system becomes autonomous rather than conversational. Anthropic documents a repeatable cycle of evaluation, tool execution, result ingestion, and continuation until completion, which is the core behavior you would reuse across products such as Claude Code and Xcode.

Why do subagents matter so much?

Subagents matter because they solve two hard problems at once: parallelization and context isolation. Anthropic’s docs say subagents have their own context windows and configurable tool access, and Anthropic’s engineering post explains that they return only relevant information back to the orchestrator instead of their full working state.

Are Skills just another name for prompts?

No. Anthropic describes Agent Skills as organized folders with a SKILL.md file and optional scripts or resources, and the model invokes them when relevant. That makes them closer to packaged procedural knowledge than to one-off prompting.

Do hooks live inside the model context?

No. Anthropic’s agent loop documentation says hooks run in the application process rather than inside the agent’s context window. That is why they are useful for deterministic checks, blocking dangerous actions, and auditing behavior without consuming model context.

Does the SDK automatically inherit Claude Code’s project memory?

Not by default. Anthropic says the Agent SDK uses an empty system prompt by default, and CLAUDE.md files are only loaded when you explicitly enable setting sources. Even the claude_code preset does not automatically load CLAUDE.md on its own.

Is Claude Code’s internal system prompt publicly documented?

No. Anthropic’s settings documentation states that it does not publish Claude Code’s internal system prompt on the docs site. The documented customization paths are CLAUDE.md, output styles, appended prompt instructions, or a custom system prompt in the SDK.
