HappyHorse-1.0 vs Seedance 2.0: Quality or Access?

If I strip away the hype, the comparison becomes easier to read. HappyHorse-1.0 is the stronger open-model story. Seedance 2.0 is the clearer product story. HappyHorse-1.0 is publicly framed as a 15B open-source video model with synchronized audio, 1080p output, multilingual lip-sync, and commercial-use rights. Seedance 2.0 is officially positioned by ByteDance as a unified multimodal audio-video model that supports text, image, audio, and video inputs and is already rolling out through CapCut in selected markets. You can see those two positions clearly on the HappyHorse project page and in ByteDance’s official Seedance 2.0 launch post.

That is why I would not reduce this to a simple “which model is better?” question. The more useful question is whether you care more about open-source upside or clearer near-term access. For builders, researchers, and teams that value self-hosting or future customization, HappyHorse-1.0 is the more strategically interesting project. For creators and content teams that need a clearer route into a real workflow, Seedance 2.0 currently has the cleaner public rollout story.

Quick verdict

My judgment is simple. HappyHorse-1.0 looks stronger as a market signal. Seedance 2.0 looks stronger as a current workflow signal. HappyHorse-1.0 is attracting attention because it promises open-source leverage, strong benchmark positioning, and infrastructure-level control. Seedance 2.0 matters because ByteDance has already attached it to a real product channel, with CapCut confirming a phased rollout for paid users in Brazil, Indonesia, Malaysia, Mexico, the Philippines, Thailand, and Vietnam. See HappyHorse’s benchmark-led positioning and CapCut’s Dreamina Seedance 2.0 newsroom announcement.

If your priority is understanding where open AI video could go next, HappyHorse-1.0 is the more interesting model to watch. If your priority is deciding what to evaluate inside a real creator stack today, Seedance 2.0 is the more practical place to start because the path to access is clearer, even if it is still limited.

At a glance

| Dimension | HappyHorse-1.0 | Seedance 2.0 |
|---|---|---|
| Ownership model | Open-source positioning | Proprietary ByteDance model |
| Public strength | Open-weights story, audio+video, commercial-use framing | Official multimodal launch, reference-rich control, rollout via CapCut |
| Access story | Project-led web presence and open-model narrative | Official launch plus phased product rollout |
| Best fit right now | Builders, researchers, infra-minded teams | Creators and content teams seeking clearer evaluation paths |
| Main concern | Public evidence surface is fragmented | Rollout limits and IP-related scrutiny |

This table is more useful than a feature checklist because it maps the two models to consequences, not just claims. One side offers more long-term optionality. The other offers more immediate structure. Readers choosing between them are usually choosing between those two conditions, whether they phrase it that way or not.

Why HappyHorse-1.0 is getting so much attention

HappyHorse-1.0 is compelling for a reason. Its public materials describe a 15-billion-parameter unified Transformer that jointly generates video and synchronized audio from text or image prompts, supports multilingual lip-sync, includes commercial-use rights, and is designed for self-hosting and fine-tuning. That combination is unusually strong because it speaks to ownership as much as output quality. The core framing is laid out on the HappyHorse public project page.

From an editorial standpoint, that changes the category of the comparison. Once a model is framed around open-source access, deployment, and downstream control, it stops being just another video tool and starts looking like infrastructure. That is why HappyHorse-1.0 feels more important than a routine launch story. It is being presented as a platform layer, not only as a front-end experience.

This is also where I need to stay disciplined about E-E-A-T (experience, expertise, authoritativeness, and trustworthiness). The strongest HappyHorse-1.0 claims still live mainly in project-led or closely aligned public pages. That does not make them false. It does mean they should be treated as project claims first and market consensus second, until more independent validation appears around access, performance, and broader adoption.

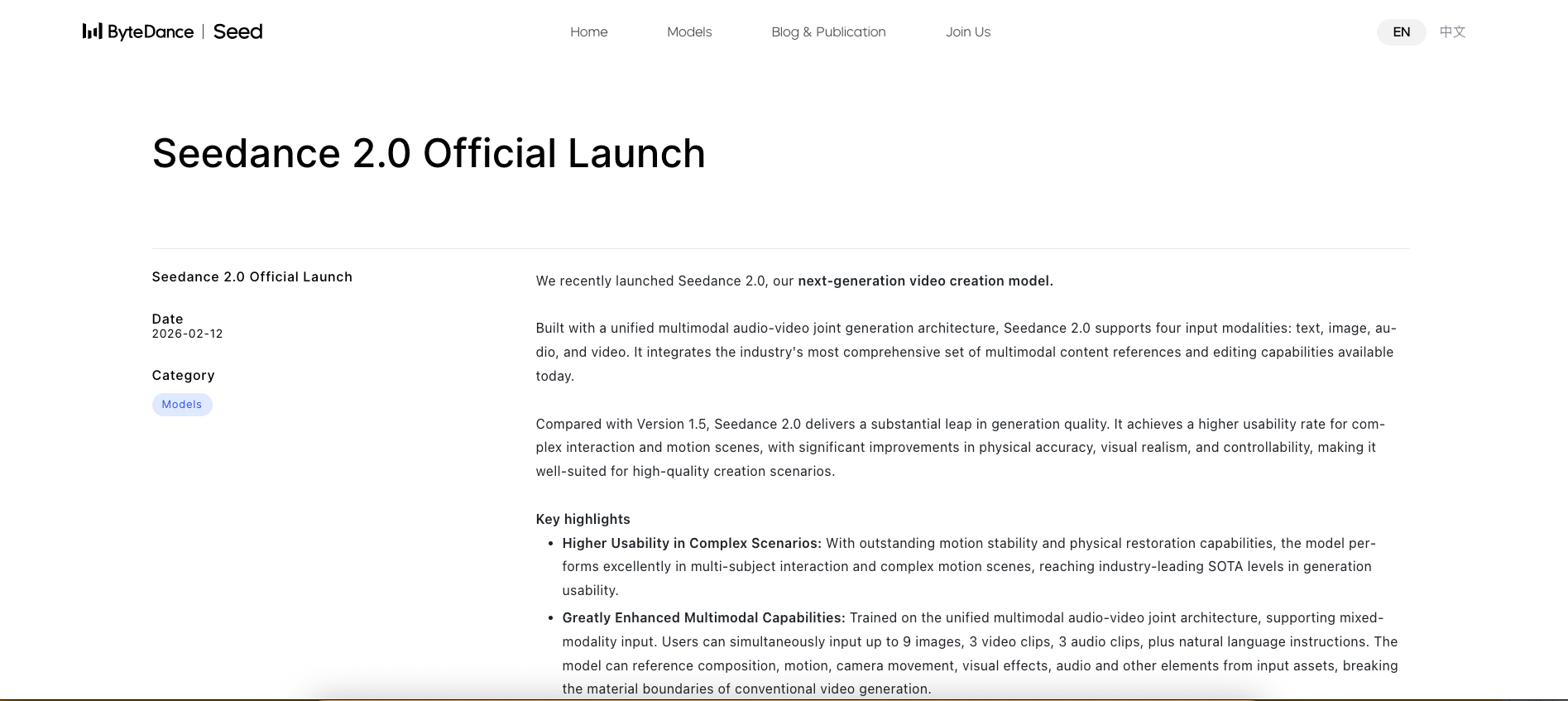

Why Seedance 2.0 still has the clearer product story

Seedance 2.0 is easier to evaluate because ByteDance has published a more coherent official surface. The company’s launch materials say the model supports four input modalities (text, image, audio, and video) and can take combined multimodal references. The same materials position it around higher controllability, video extension, editing, and 15-second multi-shot audio-video output. See the official launch post.

That official surface becomes more meaningful once distribution enters the conversation. CapCut has confirmed that Dreamina Seedance 2.0 is rolling out to paid users in selected countries, and TechCrunch reported that this limited release followed an earlier pause in broader global launch plans while ByteDance worked through intellectual property concerns. That means Seedance 2.0 is not frictionless, but it is clearly moving through a productization path that readers can understand. See CapCut’s newsroom page and TechCrunch’s rollout report.

That matters more than many comparison pages admit. For content teams, distribution is part of product quality. A model with slightly less upside can still be the better operational choice if access, workflow context, and product framing are significantly clearer.

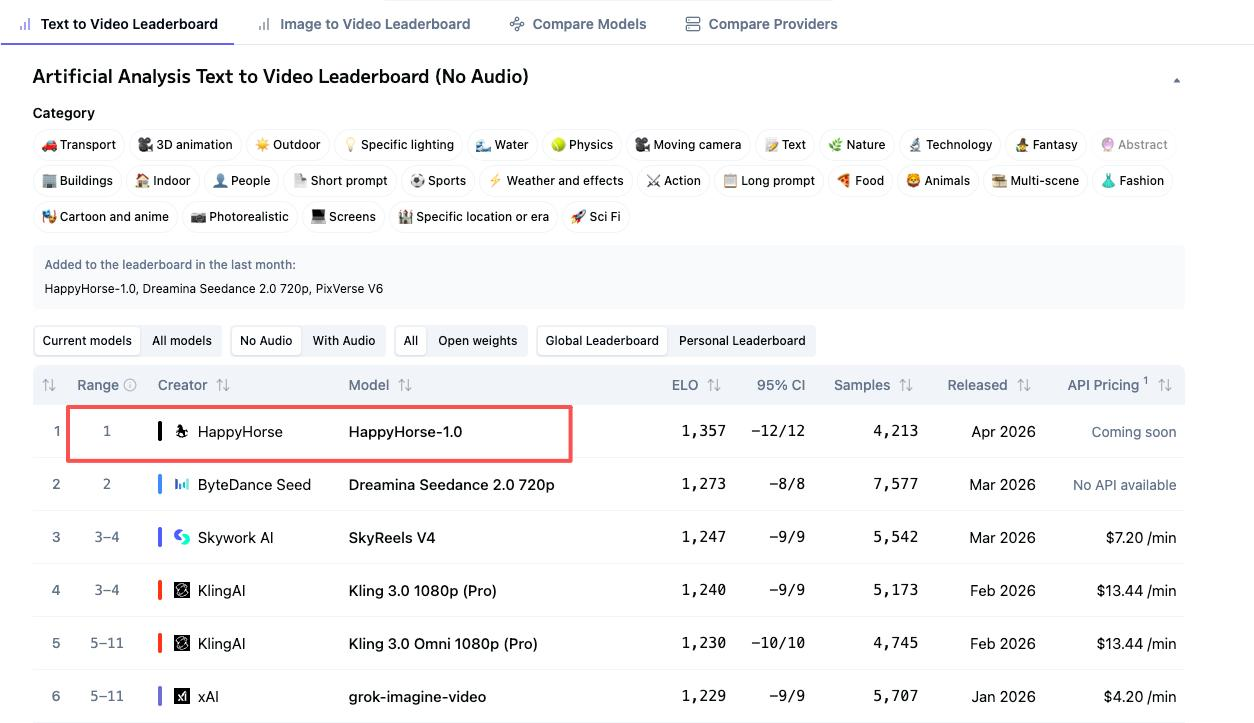

A leaderboard win is not the same as a production win

This is the section most comparison pages still avoid. HappyHorse-1.0 is being promoted with strong ranking language, including “#1” framing and Elo-style benchmark claims on public-facing pages. That matters because it signals the model deserves attention. It does not settle the adoption question. See HappyHorse’s ranking page.

In practical terms, benchmark strength and workflow readiness are different evaluations. A benchmark can tell me a model is strong under certain conditions. It cannot tell me how stable access is, how coherent the documentation is, how quickly a team can operationalize it, or how much uncertainty sits between impressive output and repeatable production. Those questions sit outside the leaderboard, but they often determine the actual winner in a working environment.
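For a sense of what an Elo-style gap can and cannot say, here is a minimal sketch of the standard Elo expected-score formula. It assumes the conventional 400-point logistic scale, which the HappyHorse pages do not confirm for their ranking. The function name and the ratings are hypothetical and are not figures from any published leaderboard.

```python
def elo_expected_score(rating_a: float, rating_b: float, scale: float = 400.0) -> float:
    """Expected share of head-to-head wins for A over B under standard Elo.

    Assumption: the leaderboard uses the conventional 400-point logistic
    scale. The source article does not state which variant is used.
    """
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / scale))


# Hypothetical ratings chosen only for illustration.
model_a_rating = 1250
model_b_rating = 1200

share = elo_expected_score(model_a_rating, model_b_rating)
print(round(share, 3))  # 0.571
```

Under these assumptions, a 50-point lead works out to roughly a 57% expected preference rate in pairwise comparisons. That is a real edge in blind output voting, but it carries no information about access, documentation, or integration effort.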

That is why this comparison becomes useful only when those two layers are separated. HappyHorse-1.0 may be the more exciting performance story. Seedance 2.0 still has the stronger documented path into a real creative environment. Treating those as the same thing would flatten the most important distinction between the models.

Where Seedance 2.0 still has the edge

Seedance 2.0 remains stronger in three places that matter to real teams: official proof, workflow framing, and ecosystem context. Official proof matters because ByteDance has published a coherent launch story. Workflow framing matters because the company is emphasizing multimodal references, editing, extension, and controllability rather than only showpiece output. Ecosystem context matters because CapCut and Dreamina give readers a credible picture of where the model is intended to live.

If I were advising a short-form content team or a creative operations group, those three signals would be hard to ignore. They do not prove that Seedance 2.0 is always the better model. They do suggest it is the easier one to assess in the context of an actual workflow.

Where HappyHorse-1.0 could be the better bet

HappyHorse-1.0 becomes more attractive when the goal shifts from immediate access to strategic leverage. Teams that care about self-hosting, future fine-tuning, deployment flexibility, and control over the stack will naturally see more value in an open-model trajectory than in a stronger closed-platform rollout. The public materials around HappyHorse-1.0 are designed to appeal precisely to that audience.

That does not mean every reader should prefer it today. It means the model may matter more over time than it does in the present moment. For infrastructure-minded readers, that distinction is often the whole reason to pay attention.

What each model still gets wrong

HappyHorse-1.0’s biggest weakness is evidentiary rather than conceptual. The story is strong, but too much of that story still lives inside project-led or closely aligned public pages. That raises the burden of verification for anyone trying to separate robust signals from aggressive positioning.

Seedance 2.0 has the opposite problem. Its proof chain is more consolidated, but its rollout remains limited and its launch story has already been shaped by intellectual property scrutiny. TechCrunch reported that ByteDance paused broader global launch plans while addressing those concerns before later expanding through a narrower CapCut rollout. That means Seedance 2.0 is easier to understand than HappyHorse-1.0, but not automatically easier to deploy across every market or policy environment. See TechCrunch’s report on the paused global launch.

Which model makes more sense for creators?

For creators and content teams, my answer is conditional. Choose Seedance 2.0 if you need a clearer evaluation path inside a known creation environment. Choose HappyHorse-1.0 if you care more about long-term open-model leverage than near-term product certainty. That is the split I would use to guide the decision.

If your workflow depends on quick testing inside a recognizable product channel, Seedance 2.0 currently has the cleaner public route. If your workflow depends on future control, infrastructure flexibility, or a stronger open-source upside story, HappyHorse-1.0 is the more interesting bet. The right choice depends less on abstract model prestige than on how your team intends to use the model over the next six to twelve months.

Final recommendation

I would not name a one-word winner here because that would misread the market. HappyHorse-1.0 looks stronger as a strategic signal. Seedance 2.0 looks stronger as a current workflow option. One invites you to think about what an open AI video stack could become. The other gives you a more concrete picture of how a major platform is trying to operationalize multimodal video creation now.

For readers who want the shortest possible answer, here it is: choose HappyHorse-1.0 if you are evaluating future leverage; choose Seedance 2.0 if you are evaluating present access. That is the most honest decision framework I can offer on the current public evidence.

FAQ

Is HappyHorse-1.0 actually available yet?

Yes, but its public availability story is still less consolidated than Seedance 2.0’s. HappyHorse-1.0 has a visible public project presence with model-level claims, demos, and open-source positioning, but much of the strongest narrative still lives in project-led or closely aligned pages rather than a broader official ecosystem rollout. That makes it visible and important, but still noisier to evaluate. See the HappyHorse public project page.

Is Seedance 2.0 easier to evaluate right now?

Yes. Seedance 2.0 is easier to evaluate right now because its official documentation and rollout path are clearer. ByteDance has an official launch post, and CapCut has confirmed a phased rollout for paid users in selected markets. That does not make the model globally frictionless, but it does make the current public story easier to follow. See ByteDance’s launch post and CapCut’s rollout page.

Does a higher-ranked model automatically make more sense for production?

No. A higher-ranked model does not automatically make more sense for production. Benchmark or leaderboard strength can signal quality, but production choices also depend on access, documentation, integration maturity, workflow friction, and governance risk. That is exactly why HappyHorse-1.0 and Seedance 2.0 should not be judged on benchmark language alone. See HappyHorse’s ranking page.

Which one makes more sense for creators?

Seedance 2.0 makes more sense for creators who prioritize a clearer route into a current workflow, while HappyHorse-1.0 makes more sense for readers who care about open-model upside and long-term control. The correct choice depends on whether your team values immediate evaluation inside a product ecosystem or greater long-term flexibility at the model layer.
