What Is HappyHorse-1.0? The Mystery AI Video Model Overtaking Seedance 2.0


Just when it seemed like Seedance 2.0 was about to dominate the AI video market, a completely unexpected challenger appeared and changed the narrative overnight.

A mysterious video generation model called HappyHorse-1.0 has suddenly become one of the hottest topics in AI. It arrived almost out of nowhere, quickly climbed to the top of public benchmark discussions, and immediately sparked debate across the AI community. For many people watching the space, the surprise is not just that HappyHorse-1.0 appeared, but that it appeared with what looks like an unusually large performance gap over existing models.

That is why everyone is asking the same question: what exactly is HappyHorse-1.0?

What Is HappyHorse-1.0?

Right now, HappyHorse-1.0 is best understood as a mystery AI video model that has rapidly gained attention for its strong text-to-video performance. It is anonymous, it does not yet have the kind of mainstream product presence that other major models have, and it is still surrounded by speculation. But despite that lack of official clarity, it has already managed to become one of the most talked-about names in AI video.

What makes the hype even bigger is the timing. Seedance 2.0 had already built a strong reputation for motion consistency, multi-shot generation, and polished visual storytelling. Many people assumed it would continue to lead the next phase of AI video. Instead, HappyHorse-1.0 suddenly entered the conversation and disrupted that expectation almost immediately.

Why Is HappyHorse-1.0 Getting So Much Attention?

The excitement around HappyHorse-1.0 is not just about leaderboard drama. It is also about the kinds of prompts people have been testing with it. The examples circulating online are highly specific and difficult for video models to handle well. These are not simple beauty-shot prompts. They test physical logic, temporal consistency, object interaction, scene continuity, and motion control. In other words, they test the exact capabilities that separate average video models from great ones.

Here are five of the prompt examples that have helped fuel the discussion around HappyHorse-1.0:

A hula hoop spinning on a kid's waist, gradually climbing to their chest, then dropping to knees, then clattering to the floor. They pick it up to try again.

A cat staring at its own reflection in a toaster, paw tapping the chrome surface. The distorted cat reflection taps back. Audio: Paw taps, confused meow.

A barista creating latte art by pouring steamed milk into espresso. The white milk submerges beneath the brown crema initially, then breaks through the surface as the cup fills. The barista's wrist makes precise oscillating movements, creating a rosetta pattern. The milk and espresso maintain their distinct colors while interacting at the boundary. Audio: The gentle pour of liquid, the hiss of the steam wand in the background.

A flower blooming and wilting over two weeks, one photo per day. Same vase, same window, same angle. Light changes with weather. Audio: Quiet domestic.

A rubber band ball bouncing down a staircase, each impact unpredictable, the ball taking a hard left into a bathroom, ricocheting off tile, finally resting in a toilet. Nobody retrieves it.

What makes these prompts so effective is that they push beyond aesthetic quality. They challenge a model’s ability to understand timing, physical interaction, continuity, motion precision, and cause-and-effect behavior. If a model performs well on prompts like these, people immediately start to see it as a serious contender rather than just another incremental release.

That is why HappyHorse-1.0 has drawn so much attention so quickly.

Who Is Behind HappyHorse-1.0?

The mystery around the model is only making the buzz stronger. Nobody seems entirely sure which company or team is behind HappyHorse-1.0. Some people believe it could come from a major Chinese tech company working quietly behind the scenes. Others think it may be tied to a newer research group or a startup that has not yet formally introduced itself. There are also guesses that it could be linked to a team with a strong background in multimodal research that simply chose to stay anonymous for now.

That uncertainty has become part of the model’s appeal. In a market where major releases are usually accompanied by polished launch campaigns, partner announcements, and immediate API positioning, HappyHorse-1.0 feels different. It has the energy of a model that earned attention through output quality first and branding second.

Benchmark Hype vs. Real-World Use

Of course, benchmark excitement and real-world usefulness are not always the same thing.

That is the key point creators, marketers, and product teams should keep in mind. A model can dominate conversation online and still be difficult to access, hard to integrate, or unavailable for production use. For most users, the best AI video model is not just the one that wins the most arguments on social media. It is the one they can actually use to make reliable, high-quality content today.

That is where platforms matter.

Even if HappyHorse-1.0 is currently the most intriguing model in the market, creators still need practical tools that are fast, accessible, and strong enough for real workflows. This is exactly why Dreamface deserves attention in the current AI video landscape.

Why Dreamface Still Matters

Dreamface is already a strong platform for creators who want access to high-quality video generation models without waiting for the market to fully settle. Instead of forcing users to bet everything on one mystery model, it gives them practical options they can use right now.

Dreamface could also move quickly if HappyHorse-1.0 becomes more broadly available. There is no official confirmation yet, but Dreamface may add support for HappyHorse-1.0 if public access opens up. For creators, that makes Dreamface worth watching not only for what it offers today, but also for what it may add next.

Veo and Seedance Are Still Strong Choices on Dreamface

In the meantime, two of the strongest reasons to use Dreamface are already clear: Veo and Seedance.

If you want premium visual quality and a more cinematic generation style, Veo is one of the most compelling models on Dreamface. It is a strong choice for creators who care about polished output, more film-like motion, and a high-end feel in their generated videos.

If you want strong coherence, cleaner action continuity, and reliable multi-shot storytelling, Seedance remains one of the most useful models available. Even with all the recent attention around HappyHorse-1.0, Seedance is still a powerful option for creators who want results they can actually build around.

Final Thoughts

The bigger lesson here is that the AI video market is moving so fast that leadership can change almost overnight. A new model can appear from nowhere and instantly reshape the conversation. But for creators, the smartest move is not to chase every new headline. It is to use a platform that gives them both strong current models and room to benefit from what comes next.

HappyHorse-1.0 may be the mystery model everyone is talking about today. But Dreamface is still one of the best places to create AI video right now, especially if you want access to proven options like Veo and Seedance while staying ready for whatever the next breakout model turns out to be.

Best Sora Alternative in 2026: Why Dreamface Is the Better Choice

When Sora first appeared, it felt like one of those rare AI moments that genuinely stopped people in their tracks. At the end of 2024, OpenAI’s video product exploded across social media. People were generating cinematic clips, inserting themselves into movie-like scenes, and sharing AI-made videos that looked far beyond what most users thought was possible.

By Grim 一 Apr 09, 2026- Sora 2

- AI Video

- AI Video Generator

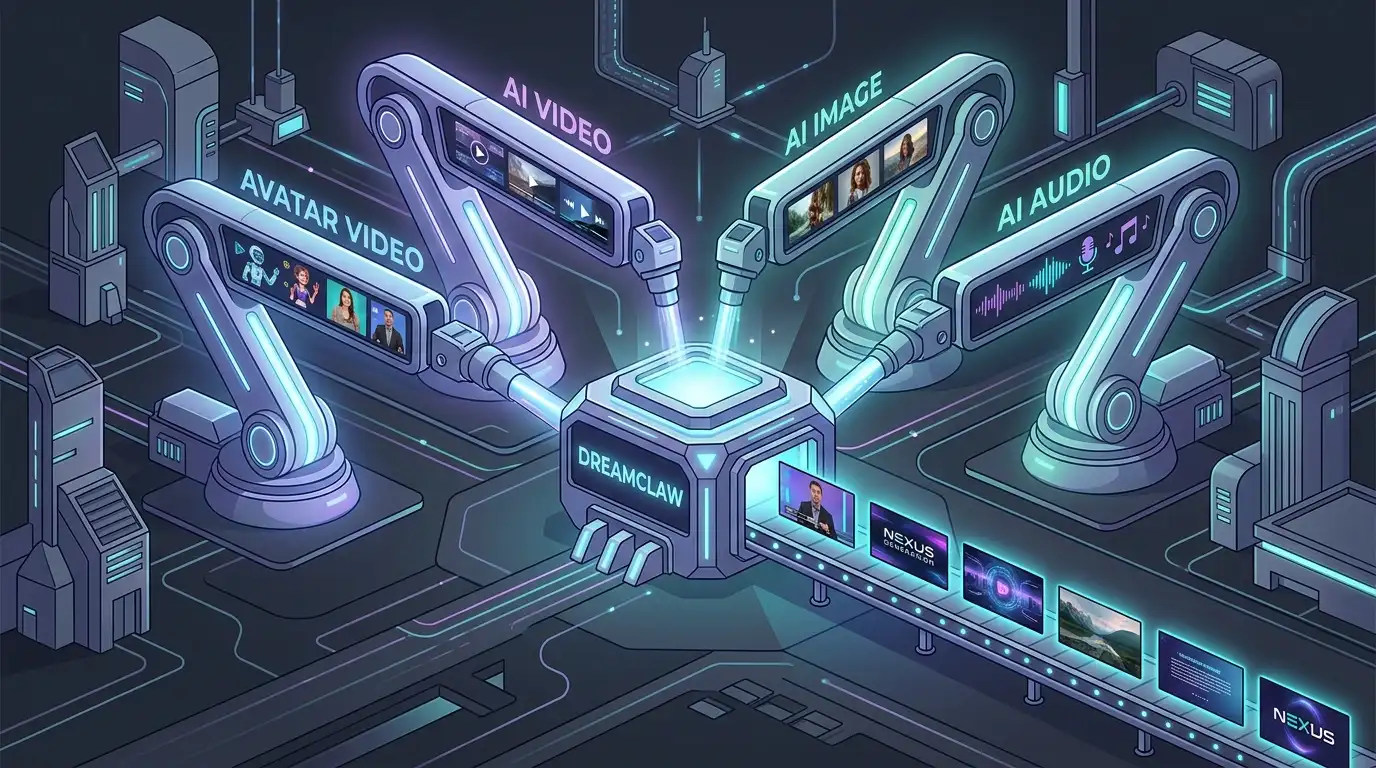

DreamClaw Agent: The All-in-One AI Automation Engine for DreamFace

DreamClaw isn’t just a new button on your dashboard. It is an intelligent AI Orchestrator—a digital director that lives within the DreamFace ecosystem. We are moving from a world where you use tools to a world where you collaborate with an agent. You provide the vision in plain English (or any language); DreamClaw handles the complex execution.

By Grim 一 Apr 09, 2026- AI Video Generator

- AI Image Generator

- DreamClaw

.jpg)

Seedance 2.0 Prompt Guide: The Ultimate Template for Cinematic AI Videos

Stop generating "slideshow" videos. This guide provides a creator-first framework for Seedance 2.0, focusing on the two pillars of high-quality AI video: logical structure and cinematic camera movement. Learn how to move beyond basic descriptions by using multi-shot templates, emotional modifiers, and stability constraints to create professional, immersive content. Stop asking if your prompt is detailed—start asking if your video is worth watching.

By Grim 一 Apr 09, 2026- AI Video

- Text-to-Video

- seedance 2.0

- Image-to-Video
