HappyHorse vs Kling 3.0 vs SkyReels V4: Which Video Model Fits Builders?

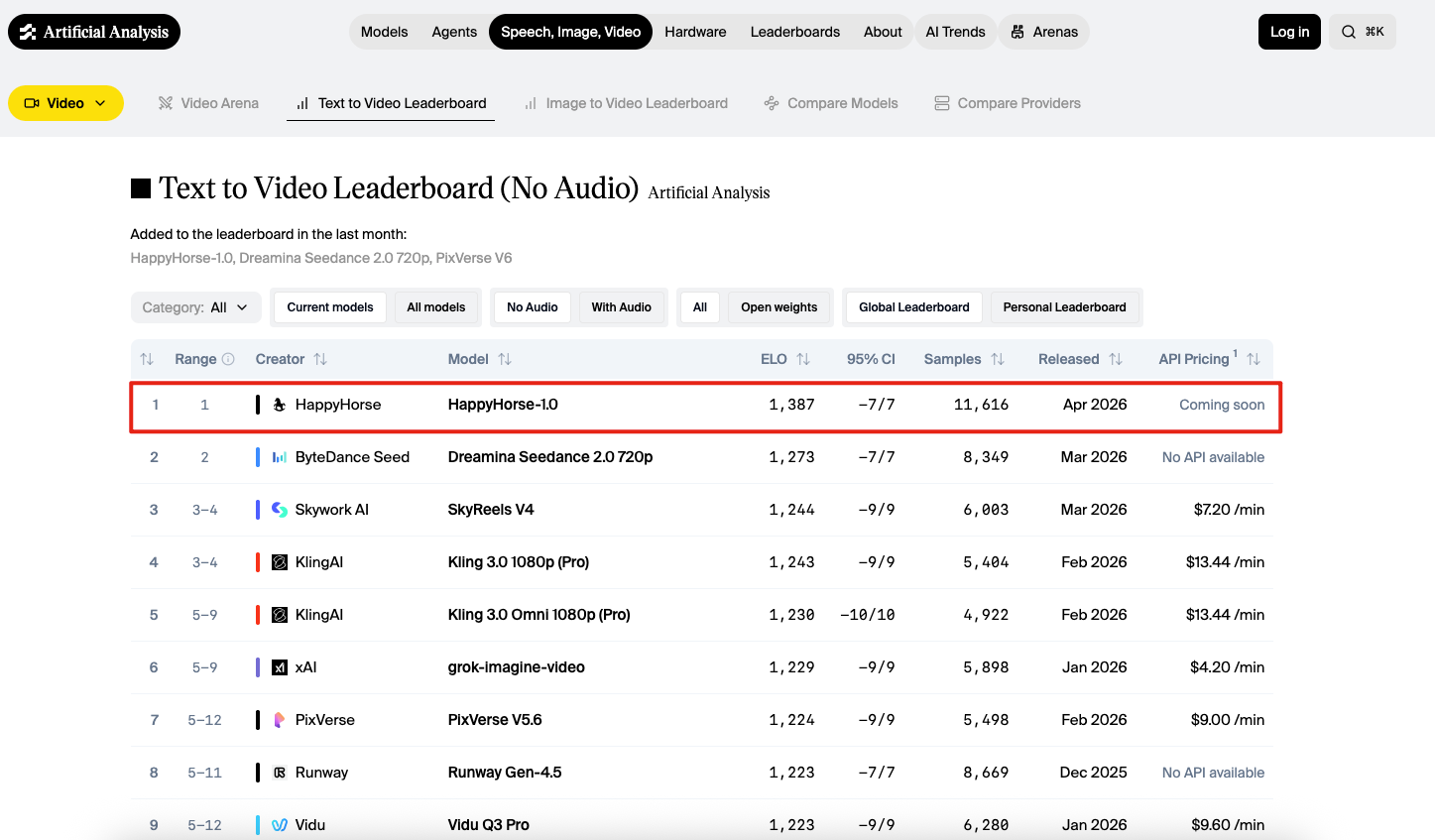

As of April 9, 2026, the public record points to three different winners depending on what a builder actually needs. HappyHorse-1.0 looks like the current leaderboard breakout in no-audio blind preference tests. Kling 3.0 looks like the clearest productized option, with official documentation for native audio, multi-shot control, reference consistency, and pricing. SkyReels V4 looks like the strongest publicly described unified multimodal architecture, with joint video-audio generation, editing, and inpainting under one system.

That is why this comparison matters for builders more than for casual prompt hobbyists. The most important distinction is not just output quality. It is the gap between leaderboard wins, public evidence quality, and production readiness.

| Dimension | HappyHorse-1.0 | Kling 3.0 family | SkyReels V4 |
|---|---|---|---|
| Best current public signal | #1 on Artificial Analysis no-audio text-to-video: Elo 1,386; #1 on no-audio image-to-video: Elo 1,412 | Official launch plus documented product controls, native audio, and pricing | Paper-backed unified multimodal architecture plus strong leaderboard presence |
| Current no-audio T2V standing | #1, released Apr 2026, pricing listed as “Coming soon” | Kling 3.0 1080p (Pro) #4 at Elo 1,242; Kling 3.0 Omni 1080p (Pro) #5 at Elo 1,230 | #3 at Elo 1,244, API pricing listed as $7.20/min |
| Current no-audio I2V standing | #1 at Elo 1,412 | Best family showing is Kling 3.0 Omni 1080p (Pro) #5 at Elo 1,298; Kling 3.0 1080p (Pro) is #13 at Elo 1,277 | #7 at Elo 1,296 |
| Audio story | Public documentation is still thin; the model-family page on Artificial Analysis still says “More details coming soon” | Native audio across multiple languages, dialects, accents, and multi-character dialogue is documented by Kuaishou | Joint video-audio generation is central to the model design, with audio references and synchronized output in the paper summary |
| Control story | Public evidence is strongest on outputs, weaker on documented controls and workflow surface | Multi-shot, custom storyboarding, reference video, multiple image references, and element consistency are all explicitly described | Text, image, video, mask, and audio references are supported in a unified generation/editing setup |
| Biggest builder risk | Unclear access, incomplete public documentation, and lower governance confidence today | Higher cost and a more closed product boundary than some alternatives | Strong architecture does not automatically mean the smoothest turnkey product path |

What each model is, and why this is not a simple “best model” question

HappyHorse-1.0 is the most disruptive name in this comparison because the blind-preference results are hard to ignore. Artificial Analysis currently shows it at the top of both the no-audio text-to-video and no-audio image-to-video leaderboards, and lists it as a model added in the last month. That makes it the most important frontier-quality signal in this group.

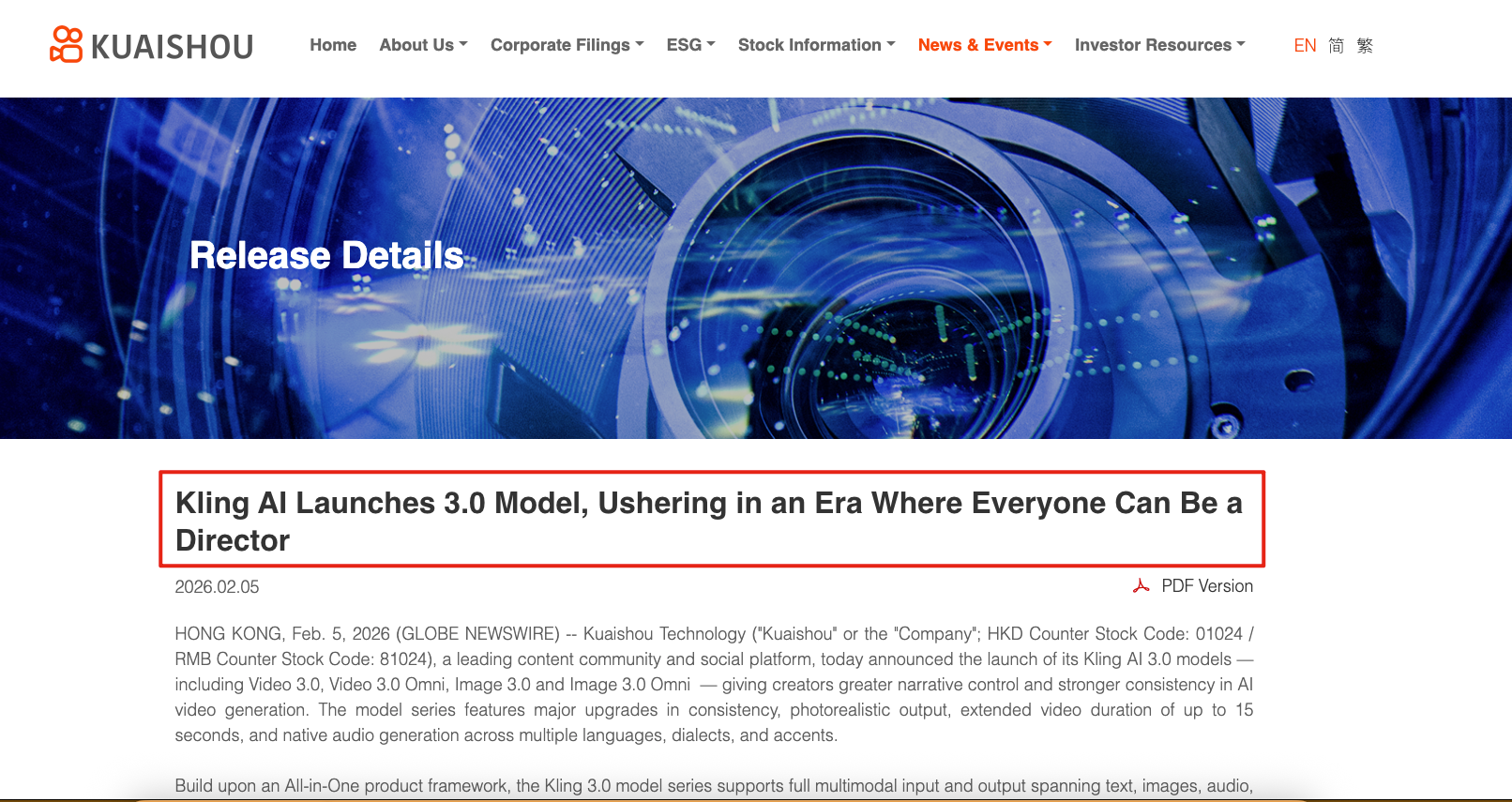

Kling 3.0 is different. Kuaishou’s launch announcement does not frame it as a mystery breakthrough. It frames it as a product family: Video 3.0, Video 3.0 Omni, Image 3.0, and Image 3.0 Omni, with stronger consistency, up to 15-second duration, native audio, reference-based generation, and shot-level control. That matters because builders do not just adopt outputs. They adopt systems with documented surfaces.

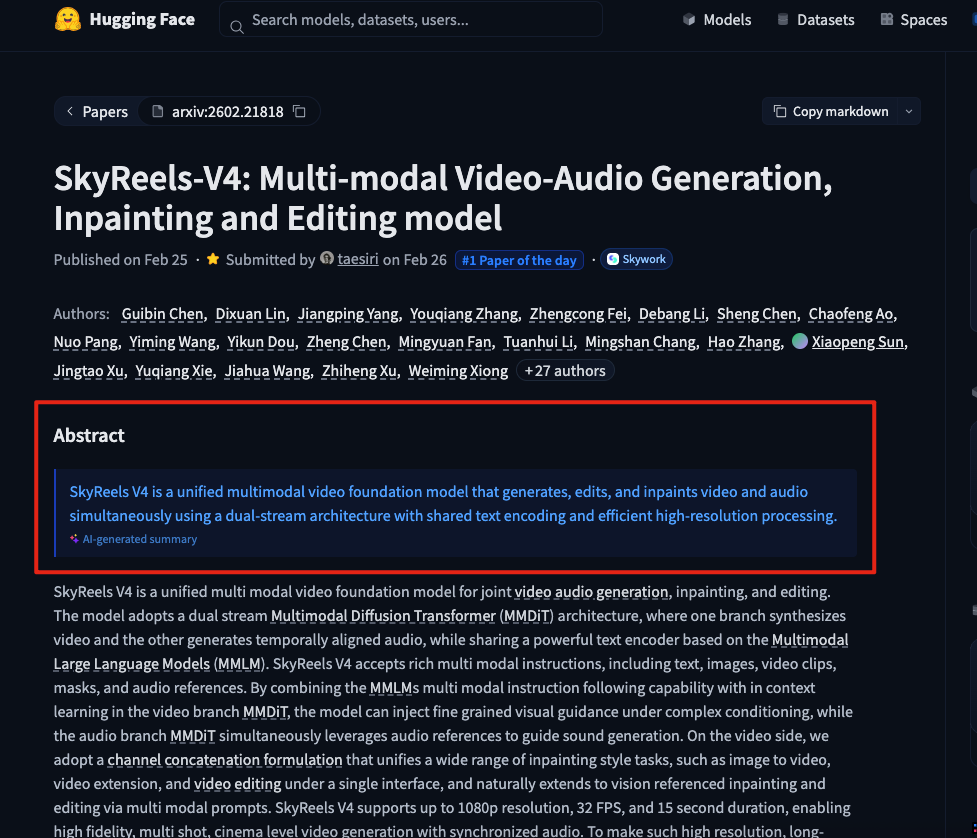

SkyReels V4 sits between those two positions. It does not have the same “anonymous champion” narrative as HappyHorse, and it does not present itself first as a mass product the way Kling does. What it does have is a clearer architectural thesis in the public record: a unified multimodal video foundation model that jointly handles video and audio generation, editing, and inpainting, using a dual-stream MMDiT setup with rich multimodal conditioning.

Why HappyHorse-1.0 is getting so much attention

The simplest answer is that HappyHorse-1.0 currently looks like the strongest no-audio output model in public blind preference testing. On the current Artificial Analysis text-to-video leaderboard, it sits at Elo 1,386. On the current image-to-video leaderboard, it sits at Elo 1,412. If your only question is “which model do users seem to prefer most often in blind no-audio comparisons right now,” HappyHorse has the cleanest answer.
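To put those Elo gaps in more concrete terms, here is a minimal sketch that converts a rating difference into an expected head-to-head preference rate. It assumes the classic logistic Elo formula with a 400-point scale. The exact rating method behind the leaderboard is not described in this article, so treat the result as a rough guide rather than the leaderboard's own math. The function name `expected_preference` is illustrative.

```python
def expected_preference(elo_a: float, elo_b: float, scale: float = 400.0) -> float:
    """Expected share of blind comparisons won by model A over model B.

    Assumption: classic logistic Elo with a 400-point scale. The leaderboard
    may use a different rating model, so this is only an approximation.
    """
    return 1.0 / (1.0 + 10 ** ((elo_b - elo_a) / scale))


# No-audio text-to-video ratings quoted in the comparison table.
HAPPYHORSE_T2V = 1386
SKYREELS_T2V = 1244
KLING_PRO_T2V = 1242

for rival_name, rival_elo in [("SkyReels V4", SKYREELS_T2V),
                              ("Kling 3.0 1080p (Pro)", KLING_PRO_T2V)]:
    share = expected_preference(HAPPYHORSE_T2V, rival_elo)
    print(f"HappyHorse-1.0 vs {rival_name}: expected preference share {share:.2f}")
```

Under that assumption, a gap of roughly 140 points means HappyHorse would be preferred in about 69% of head-to-head comparisons. That is a clear lead, but the other two models would still win close to a third of the matchups.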

The more practical answer is harder. Artificial Analysis currently lists HappyHorse pricing as “Coming soon,” and the model-family page still says “More details coming soon” rather than offering a full page of details, benchmarks, and analysis comparable to more established model entries. That means the strongest public evidence for HappyHorse today is output preference, not workflow documentation.

For builders, that distinction is decisive. A model can be the most exciting result on a leaderboard and still be the wrong default production choice if access, deployment, governance, or reproducibility are not yet public enough. Based on the available evidence, that is the right way to read HappyHorse-1.0 today.

Which model gives builders the best real-world control?

On public evidence, Kling 3.0 has the clearest control story. Kuaishou’s official materials describe multi-shot narratives, custom multi-shot storyboarding, reference videos, multiple image references, stronger element consistency, and shot-level direction. The company also describes reference-based character and voice workflows inside the 3.0 family, which makes Kling easier to reason about as an actual production tool rather than as a pure output engine.

SkyReels V4 has the most technically ambitious control story. The paper summary says it accepts text, images, video clips, masks, and audio references, and unifies generation, inpainting, and editing under a single interface. That is a stronger public description of multimodal conditioning breadth than most commercial release pages offer, and it makes SkyReels especially interesting for builders who care about structured conditioning rather than just prompt quality.

HappyHorse-1.0 is harder to place here, not because it necessarily lacks control, but because the public record still does not document its control surface with the same clarity. That matters. When the documentation gap is large, builders should resist the temptation to infer too much from the output rankings alone.

Which model is better for audio-first workflows?

For audio-first work, Kling 3.0 and SkyReels V4 are easier to recommend than HappyHorse on public evidence. Kuaishou explicitly says Kling 3.0 supports native audio across multiple languages, dialects, and accents, including multi-character dialogue with user control over content, delivery, and speaking order. The user guide also describes speaker referencing and multilingual generation in more operational detail.

SkyReels V4 also has a strong audio-first case, but for a different reason. Its public technical description treats joint video-audio generation as a first-class architectural principle rather than as a bolt-on feature, and explicitly includes audio references as part of the conditioning stack. The summary also says the model supports up to 1080p, 32 FPS, and 15 seconds with synchronized audio.

HappyHorse may eventually prove competitive in audio-first workflows, but that is not the safest conclusion to publish today. The strongest current public evidence for HappyHorse remains its no-audio leaderboard performance, while its public documentation remains incomplete.

Where each workflow still breaks down

HappyHorse-1.0 breaks down at the point where a team needs confidence rather than curiosity. If a team needs stable access, documented pricing, governance clarity, and a public explanation of how the model fits into a repeatable pipeline, the current public record is too thin to make HappyHorse the default choice.

Kling 3.0 breaks down less on capability than on cost and product boundary. Artificial Analysis currently lists Kling 3.0 1080p (Pro) at $13.44 per minute on its leaderboard pages, while Kuaishou’s user guide presents a credits-per-second structure with higher rates for 1080p native audio than for silent modes. That is not disqualifying, but it means Kling’s clearer product path comes with a more explicit commercial trade-off.
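The per-minute figures are easier to compare once they are converted to a per-clip cost. The sketch below does that arithmetic using only the Artificial Analysis prices quoted in this article. The 15-second clip length and the helper name `clip_cost_usd` are illustrative assumptions, and Kuaishou's own credits-per-second pricing may work out differently.

```python
from typing import Optional

# Per-minute prices as quoted in this article from Artificial Analysis.
# None means no public price is listed yet ("Coming soon").
PRICE_PER_MINUTE_USD = {
    "HappyHorse-1.0": None,
    "Kling 3.0 1080p (Pro)": 13.44,
    "SkyReels V4": 7.20,
}


def clip_cost_usd(price_per_minute: Optional[float], seconds: float) -> Optional[float]:
    """Cost of one clip in USD, or None when no public price exists."""
    if price_per_minute is None:
        return None
    return round(price_per_minute * seconds / 60.0, 2)


# Assumed clip length: 15 seconds, the maximum duration mentioned for
# Kling 3.0 and SkyReels V4 in this article.
for model, price in PRICE_PER_MINUTE_USD.items():
    cost = clip_cost_usd(price, 15)
    label = "no public price" if cost is None else f"${cost:.2f}"
    print(f"{model}: {label} per 15-second clip")
```

At those listed rates, a 15-second clip comes to $3.36 on Kling 3.0 1080p (Pro) and $1.80 on SkyReels V4, while HappyHorse cannot be budgeted at all yet.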

SkyReels V4 breaks down in a subtler way. Its public architecture is compelling, and Artificial Analysis currently lists it as a priced, accessible model on both leaderboards. Even so, a strong paper plus accessible pricing is not always the same thing as the smoothest end-user product experience. Builders should treat SkyReels V4 as a serious option, but still separate architectural strength from product maturity.

Who should choose HappyHorse, Kling 3.0, or SkyReels V4?

Choose HappyHorse-1.0 if your job is to scout the frontier and monitor where blind user preference is moving. It is the model that most clearly forces the market to pay attention right now, and it may become even more important if its access story catches up with its output story.

Choose Kling 3.0 if you need the clearest productized path today. Its official documentation gives you enough visibility into native audio, multi-shot control, references, consistency, and pricing to make it a more defensible operational choice for content teams, ad workflows, and structured short-form production.

Choose SkyReels V4 if your workflow depends on rich conditioning and unified multimodal behavior. If text, images, masks, video clips, and audio references all matter to your stack, SkyReels V4 has the most persuasive public story about handling those demands in one architecture.

Wait before making HappyHorse-1.0 your production default if your team has strict requirements around compliance, procurement confidence, repeatability, or clearly documented access. That is not a knock on output quality. It is a reminder that builders ship systems, not just clips.
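The guidance above can be sketched as a simple requirements filter. The capability flags below are one reading of what this article says is publicly documented for each model, not an authoritative feature matrix. An absent flag only means the capability is not documented here, not that the model lacks it. The names `DOCUMENTED` and `shortlist` are hypothetical.

```python
# Capabilities this article describes as publicly documented per model.
# Assumption: these flags summarize the comparison above; a missing flag
# means "not documented in this article", not "unsupported".
DOCUMENTED = {
    "HappyHorse-1.0": set(),
    "Kling 3.0": {"native_audio", "multi_shot", "reference_inputs", "listed_price"},
    "SkyReels V4": {"native_audio", "reference_inputs", "unified_editing", "listed_price"},
}


def shortlist(required: set) -> list:
    """Return models whose documented capabilities cover every requirement."""
    return sorted(name for name, features in DOCUMENTED.items() if required <= features)


print(shortlist({"native_audio", "listed_price"}))     # both documented options
print(shortlist({"multi_shot"}))                       # storyboard-driven teams
print(shortlist({"unified_editing", "native_audio"}))  # conditioning-heavy stacks
```

A filter like this deliberately ignores leaderboard rank. It only answers whether the public record is strong enough to plan around, which is the question the recommendations in this section turn on.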

Is the higher-ranked model actually the better production choice?

Not necessarily.

The stronger practical conclusion is that leaderboard strength should be treated as one signal, not the whole decision. HappyHorse-1.0 currently owns the biggest quality signal in public no-audio blind tests. Kling 3.0 owns the clearest official product surface. SkyReels V4 owns the strongest public architectural case for unified multimodal video-audio generation and editing. Those are three different forms of strength, and builders should keep them separate.

That is also why the most useful reading of this market is not “who won.” It is “which model reduces risk for my actual workflow.” For many teams in 2026, that answer will still be Kling 3.0 first, SkyReels V4 second, and HappyHorse-1.0 as the model to watch most closely. For frontier-focused teams with a higher tolerance for uncertainty, HappyHorse-1.0 may move to the front of that order.

Final recommendation

If I had to reduce this to one sentence for builders, it would be this: HappyHorse-1.0 is the model to watch, Kling 3.0 is the model to ship with first, and SkyReels V4 is the model to evaluate seriously if multimodal conditioning is central to your stack.

That framing is more useful than a flat winner-takes-all ranking because it acknowledges how these models actually enter teams. One wins attention. One wins operational confidence. One wins architectural ambition. Builders should choose based on which of those matters most right now.

FAQ

Is HappyHorse-1.0 the best AI video model right now?

HappyHorse-1.0 is the strongest publicly visible no-audio blind-preference leader right now, but that is not the same thing as being the best all-around production choice. Its public documentation and access story are still incomplete compared with Kling 3.0 and SkyReels V4.

Is Kling 3.0 better than SkyReels V4 for short-form content teams?

Kling 3.0 is easier to justify for many short-form teams because its official product surface is clearer. SkyReels V4 can be more attractive when multimodal conditioning and unified editing matter more than having the cleanest product packaging.

Which model is better for audio-first workflows?

On public evidence, Kling 3.0 and SkyReels V4 are the safer recommendations for audio-first work. Kling has the clearest official native-audio product story, while SkyReels V4 has the strongest public architectural case for joint audio-video generation.

Should builders wait before adopting HappyHorse-1.0?

Many teams probably should. If your workflow depends on stable access, documented pricing, and higher governance confidence, the current public record suggests waiting until HappyHorse’s access and documentation catch up with its leaderboard results.

Does SkyReels V4 matter even if it is not the leaderboard leader here?

Yes. SkyReels V4 matters because it has one of the clearest public descriptions of a unified multimodal video-audio system, and it still ranks strongly on current leaderboards. Builders evaluating medium-term architecture should not ignore it just because HappyHorse currently has the louder headline.
