How to Keep Your Original Voice in AI Video Translation

If you translate videos with AI, you can keep part of your original voice. But you usually cannot keep all of it by default.

That is the key point. AI can reproduce parts of your voice identity, such as timbre, cadence, accent, and pronunciation. It can also help with lip-sync and fast multilingual publishing. But even the best tools still struggle with emotion transfer, pacing, idioms, proper nouns, and, in some cases, voice matching. That is why a translated video can sound like you on the surface yet still not feel like you.

For most creators, the real goal is not perfect cloning. The real goal is this: when someone watches the Spanish, French, or Japanese version, it should still feel like the same creator, the same brand, and the same style of delivery. Based on the available evidence, that depends more on workflow and review than on the translation button itself.

Quick decision block

Use AI voice preservation when your content is clear, spoken, and structured, such as lessons, explainers, product demos, or spokesperson videos.

Use it more carefully for comedy, emotional storytelling, confession-style videos, or any format where timing and emotional weight matter more than speed.

Use subtitles or partial re-recording when the translated dub is accurate but no longer sounds like something you would actually say. That is especially relevant because YouTube says automatic dubbing does not fully transfer tone and emotion yet, and works better on content that does not rely heavily on expressiveness.

Can AI really keep your original voice?

Yes, partly.

ElevenLabs explains voice cloning as learning a representation of a voice, not reproducing the original recording itself. In plain English, that means AI can learn the shape of your voice, but it does not fully recreate the exact performance you gave in the source video. If the input audio is short, noisy, or compressed, the result gets worse.

That is why “same voice” and “same feeling” are not the same thing.

A good clone may sound close to your voiceprint. But your original voice in a real video is more than a voiceprint. It also includes your pauses, your emphasis, your sentence rhythm, and the way you soften or punch certain words. When those parts change, viewers notice quickly, even if they cannot explain why. YouTube's own guidance on automatic dubbing flags the same kinds of problems: voice matching, mispronunciations, pacing, and background noise.

What “your original voice” really means

When creators say, “I want to keep my original voice,” they usually mean five things at once.

First, they want the sound of the voice to stay familiar.

Second, they want the rhythm to feel natural.

Third, they want the stress and emphasis to land in the right places.

Fourth, they want the emotion to survive the translation.

Fifth, they want the personality of the language to stay intact. That means the translated version should still sound like something this creator would say, not like a neutral corporate script.

Current tools can help with parts of that. HeyGen, for example, promotes voice preservation, lip-sync, subtitles, and glossary controls. ElevenLabs highlights preserved emotion, timing, tone, speaker separation, and editable transcripts in dubbing. Those features matter because they address different layers of “original voice,” not just one.

The mistake is thinking one feature solves all five layers.

Voice cloning helps with the first layer. Editable dubbing helps with the second and third. Human review helps with the fourth and fifth. If you skip those later steps, the result is often technically correct but personally flat. That is the most practical reading of the current product landscape.

Where AI translation loses your voice fastest

The first weak point is bad source audio.

If your original track has echo, noise, heavy compression, or uneven microphone distance, the clone has less useful material to learn from. ElevenLabs says poor-quality, short, or noisy samples will degrade the clone. That one detail probably explains many disappointing results.

The second weak point is literal translation.

A sentence can be correct in meaning and still be wrong in delivery. Maybe it is too long for the target language. Maybe the pause comes too late. Maybe the emphasis lands on the wrong word. That is why editable transcripts and segment regeneration matter so much. ElevenLabs explicitly offers manual transcript and translation edits, plus regenerated speech segments. HeyGen also emphasizes localization controls, a brand glossary, protected terms, forced translations, and pronunciation control.
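If your tool does not enforce a glossary, or you want to double-check an exported transcript, a short script can flag brand names and protected terms that did not survive translation. This is a minimal sketch, not a HeyGen or ElevenLabs feature: the function name, the glossary, and the sample line are hypothetical, and it assumes you can export the translated transcript as plain text.

```python
import re


def missing_protected_terms(translated_text, protected_terms):
    """Return the protected terms that do not appear verbatim in the translation.

    Matching is case-sensitive and requires the term to stand on its own
    (not inside a longer word), so a brand name that was translated,
    transliterated, or re-cased is reported as missing.
    """
    missing = []
    for term in protected_terms:
        # Lookarounds instead of \b so terms that start or end with punctuation still work.
        pattern = r"(?<!\w)" + re.escape(term) + r"(?!\w)"
        if re.search(pattern, translated_text) is None:
            missing.append(term)
    return missing


# Hypothetical glossary and translated line, for illustration only.
glossary = ["HeyGen", "ElevenLabs", "Studio Pro"]
line = "Abre HeyGen y exporta el audio a ElevenLabs."
print(missing_protected_terms(line, glossary))  # ['Studio Pro']
```

Exact, case-sensitive matching is deliberate here: for protected terms, a "close enough" rendering is usually the error you want to catch.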

The third weak point is performance-heavy content.

YouTube says automatic dubbing currently does not transfer tone and emotion fully, and it works better on content that does not depend on expressiveness. That is a very important signal. If your video depends on sarcasm, tension, humor, confession, or dramatic timing, automatic dubbing may solve the language problem while creating a performance problem.

A simple workflow that keeps more of your voice

You do not need a complicated workflow. You need a careful one.

1. Start with cleaner source audio than you think you need

A clean voice track gives the model a better base. Remove noise if you can. Avoid over-compressed exports. If the source audio is weak, the translated dub will usually carry that weakness forward.
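A quick script can catch two of these weaknesses before you upload anything: a sample that is too short and audio that clips at full scale. This is a minimal sketch using only Python's standard library, assuming a 16-bit PCM WAV export of your voice track. The function names and both thresholds (60 seconds, 0.1% clipped samples) are hypothetical placeholders, not ElevenLabs guidance. It does not detect echo or background noise, which still need your ears or a dedicated tool.

```python
import array
import math
import sys
import wave

FULL_SCALE = 32768  # magnitude of the most negative 16-bit sample


def read_pcm16(path):
    """Read a 16-bit PCM WAV file and return (samples, frame_rate, channels)."""
    with wave.open(path, "rb") as wav:
        if wav.getsampwidth() != 2:
            raise ValueError("this sketch only handles 16-bit PCM WAV files")
        rate = wav.getframerate()
        channels = wav.getnchannels()
        raw = wav.readframes(wav.getnframes())
    samples = array.array("h")
    samples.frombytes(raw)
    if sys.byteorder == "big":
        samples.byteswap()  # WAV sample data is little-endian
    return samples, rate, channels


def source_audio_report(samples, rate, channels=1,
                        min_seconds=60.0, max_clip_ratio=0.001):
    """Summarize length, level, and clipping; the thresholds are illustrative guesses."""
    n = len(samples)
    if n == 0:
        raise ValueError("no audio samples")
    duration = n / (rate * channels)  # interleaved channels share one frame
    clip_ratio = sum(1 for s in samples if abs(s) >= FULL_SCALE - 1) / n
    rms = math.sqrt(sum(s * s for s in samples) / n)
    return {
        "duration_s": duration,
        "rms_dbfs": 20 * math.log10(rms / FULL_SCALE) if rms > 0 else float("-inf"),
        "clip_ratio": clip_ratio,
        "too_short": duration < min_seconds,
        "clipping": clip_ratio > max_clip_ratio,
    }


# Example (hypothetical file name):
# report = source_audio_report(*read_pcm16("voice_track.wav"))
```

If `clipping` comes back true, re-export at a lower level rather than feeding the clipped track to the clone.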

2. Pick the right level of control

If speed matters most, automatic dubbing may be enough.

If voice consistency matters more, use a workflow that lets you edit transcripts, fix terms, adjust pronunciation, and regenerate segments. That is where tools with editable dubbing are stronger. HeyGen highlights localization controls and glossary management. ElevenLabs highlights transcript editing, translation editing, speaker separation, background audio retention, and segment regeneration.

3. Edit the translation before you fix the voice

This is where many teams waste time.

If the translated script does not sound like something the creator would naturally say, changing the voice settings will not solve the real issue. Fix the wording first. Shorten long lines. Replace stiff phrases. Keep the creator’s usual level of directness, humor, or warmth. Then regenerate the audio. The public product docs are consistent with this order, since they treat transcript editing and regeneration as core dubbing controls.
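"Shorten long lines" can be made a little more concrete by comparing the time a translated line would need against the time its source segment occupies. The sketch below assumes you have segment start and end times plus the translated text; the `Segment` type, the function name, and the characters-per-second rates are hypothetical placeholders, and character count is only a crude proxy for speaking time.

```python
from dataclasses import dataclass

# Hypothetical speaking rates in characters per second. Real rates vary by
# speaker, language, and content, so measure your own before trusting these.
CHARS_PER_SECOND = {"es": 15.0, "fr": 14.0, "de": 13.0}


@dataclass
class Segment:
    start: float  # seconds on the source timeline
    end: float
    translated_text: str


def overlong_segments(segments, lang, tolerance=1.05):
    """Return (index, needed_s, available_s) for lines that likely will not fit."""
    rate = CHARS_PER_SECOND[lang]
    flagged = []
    for i, seg in enumerate(segments):
        available = seg.end - seg.start
        needed = len(seg.translated_text) / rate  # crude length-based estimate
        if needed > available * tolerance:
            flagged.append((i, round(needed, 2), round(available, 2)))
    return flagged


script = [
    Segment(0.0, 2.0, "Hola a todos."),
    Segment(2.0, 3.0, "Hoy vamos a ver tres errores muy comunes al traducir vídeos."),
]
for index, needed, available in overlong_segments(script, "es"):
    print(f"segment {index}: needs ~{needed}s, has {available}s; shorten it")
```

The point is only to surface lines worth rewriting before you regenerate audio; a flagged line still needs a human to decide how to shorten it in the creator's own style.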

4. Treat pauses and emphasis as separate problems

A translated dub can have the right words and still feel wrong because the pauses are off.

Check where sentences breathe. Check which word gets the punch. Check whether the lip-sync helps or distracts. This matters because HeyGen pushes lip-sync as a core part of its translator, while YouTube warns that fast speech can create an unlistenable dub.
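One way to make "check where sentences breathe" less subjective is to compare the pause before each sentence in the source with the same pause in the dub. This is a minimal sketch, assuming you can get aligned sentence timings for both tracks (for example, from exported subtitle files); the function name and the 0.3-second threshold are hypothetical. It only measures pauses and cannot judge emphasis, which still needs a listener.

```python
def pause_mismatches(source, dub, max_diff=0.3):
    """Compare the pause before each segment in the source and the dub.

    `source` and `dub` are parallel lists of (start, end) times in seconds,
    one pair per aligned sentence. Returns (index, source_gap, dub_gap) for
    every segment whose preceding pause differs by more than `max_diff`.
    """
    if len(source) != len(dub):
        raise ValueError("segments must be aligned one-to-one")
    flagged = []
    for i in range(1, len(source)):
        src_gap = source[i][0] - source[i - 1][1]
        dub_gap = dub[i][0] - dub[i - 1][1]
        if abs(src_gap - dub_gap) > max_diff:
            flagged.append((i, round(src_gap, 2), round(dub_gap, 2)))
    return flagged


source_times = [(0.0, 2.0), (2.8, 5.0), (5.2, 7.0)]
dub_times = [(0.0, 2.4), (2.5, 5.1), (5.3, 7.0)]
print(pause_mismatches(source_times, dub_times))  # [(1, 0.8, 0.1)]
```

In this example the dub nearly erases a deliberate 0.8-second pause, which is exactly the kind of rhythm change viewers feel without being able to name it.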

5. Review with a target-language speaker before publishing

This is not optional if quality matters.

YouTube explicitly says you or someone who speaks the target language can review dubs before publication. That is one of the clearest signs that AI dubbing is still a draft-plus-review workflow, not a publish-blind workflow.

When subtitles or re-recording are the better choice

Sometimes the best way to keep your original voice is not to force AI dubbing at all.

Subtitles are often the better choice when the original performance is the product. That includes stand-up clips, emotional personal stories, highly expressive commentary, or anything where timing carries meaning. In those cases, the original audio may be more valuable than a translated dub. YouTube’s own guidance points in this direction by saying automatic dubbing works better on content that does not rely on expressiveness.

Partial re-recording can also be a smart middle ground.

You may keep AI dubbing for most of the video, then manually re-record a short hook, punchline, or emotional section. That often protects the part viewers remember most, while still saving time on the rest of the video. This is an editorial recommendation based on the documented limits above, not a company claim.

How current tools help in different ways

HeyGen is built around broad multilingual reach and presentation polish. Its translator page highlights 175+ languages and dialects, voice preservation, lip-sync, subtitles, brand glossary, forced translations, protected terms, and pronunciation controls. That makes it attractive when you want one workflow for multilingual creator or business content at scale.

ElevenLabs is stronger when you care about deeper voice control and post-generation editing. Its dubbing docs describe 32-language support, speaker separation, background-audio retention, editable transcripts and translations, and regenerated segments. Its voice-cloning docs are also unusually clear about the quality limits of the clone itself.

YouTube automatic dubbing is the low-friction option. It is built into the platform, and YouTube lets eligible creators review dubs before publishing. But it also has the clearest public warnings about limits, especially for expressive content, voice matching, pacing, idioms, and jargon.

So the practical choice is simple.

Choose the fastest path when reach matters most.

Choose the more editable path when brand voice matters most.

Choose subtitles or re-recording when the original performance matters most.

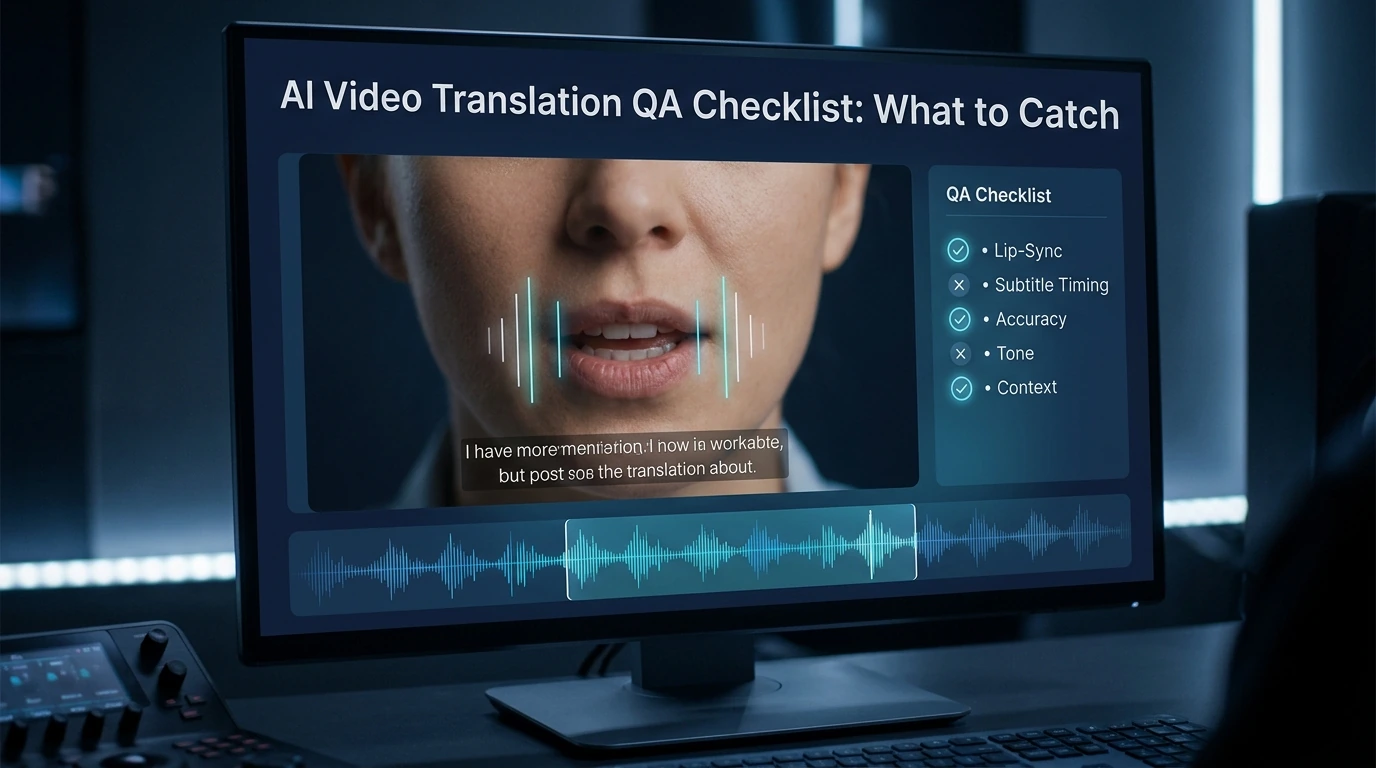

A short QA checklist before you publish

Ask these five questions before a translated dub goes live.

Does this still sound like the same person?

Not just the same tone color. The same person.

Would the creator really phrase it this way in the target language?

This catches literal translation problems fast.

Are the pauses and emphasis natural?

A small rhythm issue can make a good dub feel fake.

Did lip-sync improve the result or make it stranger?

Sometimes a slight mismatch is less distracting than over-processed sync.

Would a native listener trust this version?

That is the final test. If the target-language listener says it feels stiff, the workflow is not done yet.
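If several people review dubs, it can help to record the five questions as a simple publish gate so nothing goes live with an unanswered item. This is a hypothetical sketch of that bookkeeping only: `True` means the reviewer, ideally a native listener, judged the question a pass (for the lip-sync question, that lip-sync helped or any mismatch is acceptable). The judgment itself stays human.

```python
QA_QUESTIONS = (
    "Does this still sound like the same person?",
    "Would the creator really phrase it this way in the target language?",
    "Are the pauses and emphasis natural?",
    "Did lip-sync improve the result or make it stranger?",
    "Would a native listener trust this version?",
)


def review_status(answers):
    """Return (ready, open_items) from a reviewer's pass/fail answers.

    `answers` maps each question to True (pass) or False (fail). Missing
    answers count as open, so an incomplete review never reads as ready.
    """
    open_items = [q for q in QA_QUESTIONS if answers.get(q) is not True]
    return len(open_items) == 0, open_items


answers = {q: True for q in QA_QUESTIONS}
answers["Are the pauses and emphasis natural?"] = False
ready, todo = review_status(answers)
print(ready, todo)
```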

FAQ

Does AI dubbing really preserve your original voice?

AI dubbing can preserve part of your original voice, but not all of it. Current tools can keep elements like timbre, cadence, and some delivery patterns, but emotion, pacing, phrasing, and the personality of your language often need extra editing and review.

Why does the translated audio sound like me but not feel like me?

Because voice identity is more than sound. Even if the clone matches your voiceprint, the translated version can still lose your pauses, emphasis, emotional timing, and natural phrasing in the new language.

How much source audio do I need for better voice preservation?

There is no single universal amount that guarantees a perfect result. But ElevenLabs clearly says short, poor-quality, or noisy samples reduce clone quality, so cleaner and stronger source material gives you a better starting point.

Is automatic dubbing enough for most YouTube creators?

It can be enough for clear, informational content. It is a weaker fit for videos that depend on strong emotion, humor, or performance, because YouTube says tone and emotion are not fully transferred yet and voice matching can still be an issue.

When should I use subtitles instead of voice cloning?

Use subtitles when the original performance is more important than full audio localization. That usually includes comedy, emotional storytelling, or any video where the original voice carries meaning that a dub may flatten.

Can I publish AI-translated dubs without review?

You can, but that is not the safer choice if quality matters. YouTube explicitly allows creators or target-language speakers to review dubs before publication, which reflects the current reality that AI dubbing still benefits from human QA.
